[Konrad Dybcio] tells about his journey booting Linux on A7/8/8X processors, playing around with an old iPhone 5 he’s got in a drawer. It’s been a two-year “revisit every now and then” journey, motivationally fueled by the things like Linux on M1 Macs announcement. In the end, what we have here is a way to boot mainline Linux on a few less-than-modern but still very usable iPhones, and a fun story about getting there.

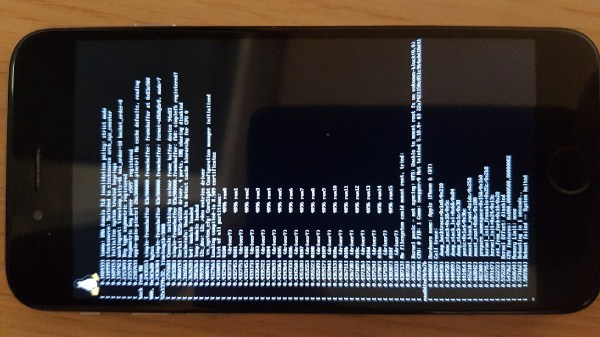

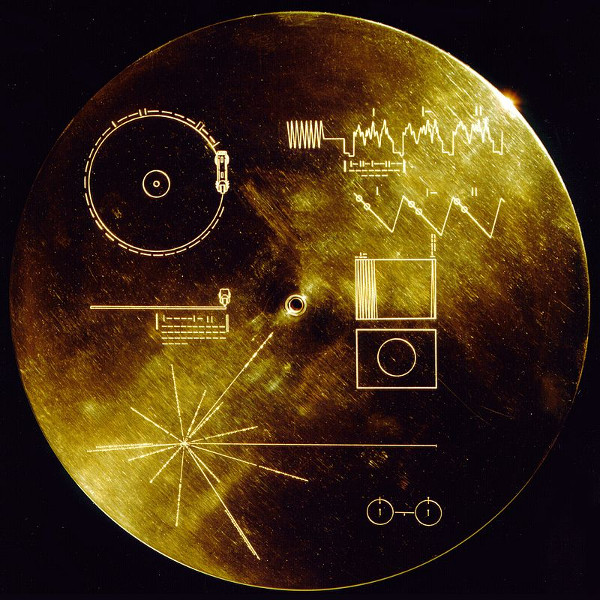

[Konrad]’s work is based on the Sandcastle project research, but he couldn’t quite figure out how to make their code work, and had to make sense of it as he went. At some point, he got stuck on enabling the MMU, which was the main roadblock for a while. Joined by another developer intrigued by Apple hardware, they were hacking away at it, developing tools and neat tricks on their way, but to no avail. With the framebuffer accessible and no other decent debugging methods in sight, he tells about a code snippet they wrote that printed register values as valid barcodes Continue reading “Boot Mainline Linux On Apple A7, A8 And A8X Devices”