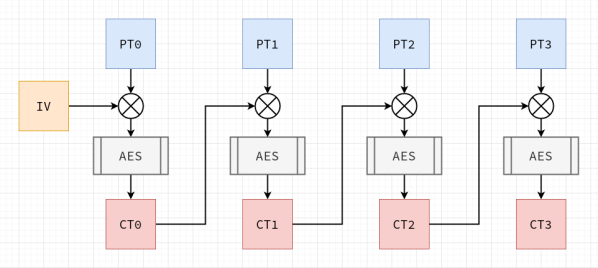

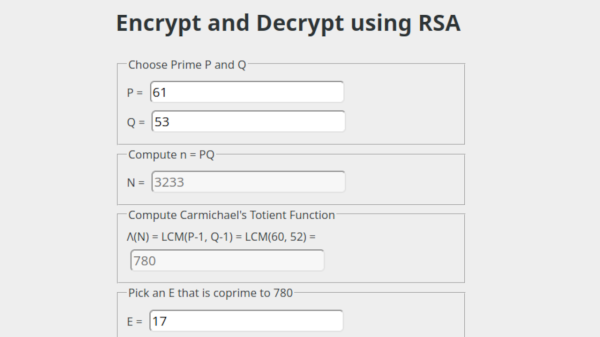

Software developers are usually told to ‘never write your own cryptography’, and there definitely are sufficient examples to be found in the past decades of cases where DIY crypto routines caused real damage. This is also the introduction to [Francis Stokes]’s article on rolling your own crypto system. Even if you understand the mathematics behind a cryptographic system like AES (symmetric encryption), assumptions made by your code, along with side-channel and many other types of attacks, can nullify your efforts.

So then why write an article on doing exactly what you’re told not to do? This is contained in the often forgotten addendum to ‘don’t roll your own crypto’, which is ‘for anything important’. [Francis]’s tutorial on how to implement AES is incredibly informative as an introduction to symmetric key cryptography for software developers, and demonstrates a number of obvious weaknesses users of an AES library may not be aware of.

This then shows the reason why any developer who uses cryptography in some fashion for anything should absolutely roll their own crypto: to take a peek inside what is usually a library’s black box, and to better understand how the mathematical principles behind AES are translated into a real-world system. Additionally it may be very instructive if your goal is to become a security researcher whose day job is to find the flaws in these systems.

Essentially: definitely do try this at home, just keep your DIY crypto away from production servers :)