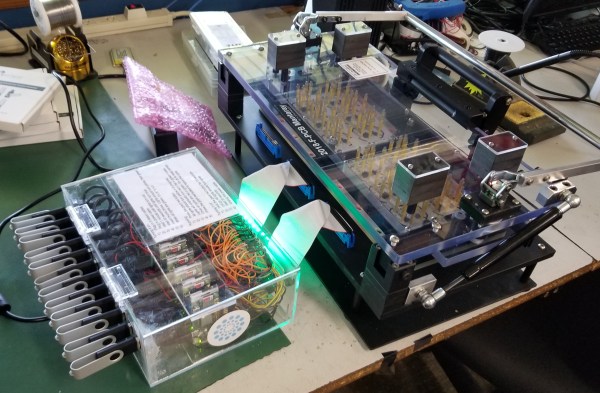

Assembly lines for electronics products are complicated beasts, often composed of many custom tools and fixtures. Typically a microcontroller must be programmed with firmware and the circuit board tested before assembly into the enclosure, with functional testing to follow before the finished unit goes in a box. These test platforms can be very expensive, easily running into the tens of thousands of dollars. Instead, this project uses a set of 12 Raspberry Pi Zero Ws in parallel to program, test, and configure up to 12 units at once before moving on to the next stage in assembly.

Day: December 30, 2019

Image Sensor From Discrete Parts Delivers Glorious 1-Kilopixel Images

Chances are pretty good that you have at least one digital image sensor somewhere close to you at this moment, likely within arm’s reach. The ubiquity of digital cameras is due to how cheap these sensors have become, and how easy they are to integrate into all sorts of devices. So why in the world would someone want to build an image sensor from discrete parts that’s 12,000 times worse than the average smartphone camera? Because, why not?

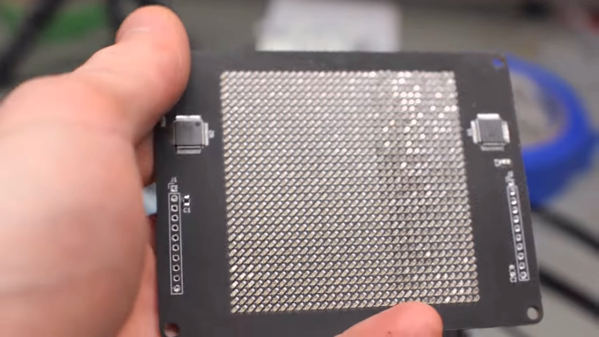

[Sean Hodgins] originally started this project as a digital pinhole camera, which is why it was called “digiObscura.” The idea was to build a 32×32 array of photosensors and focus light on it using only a pinhole, but that proved optically difficult as the small aperture greatly reduced the amount of light striking the array. The sensor, though, is where the interesting stuff is. [Sean] soldered 1,024 ALS-PT19 surface-mount phototransistors to the custom PCB along with two 32-channel analog multiplexers. The multiplexers are driven by a microcontroller to select each pixel in turn, one row and one column at a time. It takes a full five seconds to scan the array, so taking a picture hearkens back to the long exposures common in the early days of photography. And sure, it’s only a 1-kilopixel image, but it works.
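
For a feel of what that row-and-column scan involves, here is a minimal Python sketch. It is not [Sean]'s firmware: `scan_array` and `fake_read_pixel` are hypothetical names made up for illustration, and on real hardware the read step would set the two multiplexer addresses and sample an ADC rather than return a computed value. The only figures taken from the post are the 32×32 array and the five-second scan.

```python
from typing import Callable, List

ROWS = 32            # one multiplexer selects the row
COLS = 32            # the other selects the column
SCAN_SECONDS = 5.0   # full-frame scan time quoted in the post


def scan_array(read_pixel: Callable[[int, int], int]) -> List[List[int]]:
    """Visit every pixel once, selecting one row and one column at a time."""
    frame = []
    for row in range(ROWS):
        line = []
        for col in range(COLS):
            # Hypothetical hook: on hardware this would set the row and
            # column mux addresses, then sample the phototransistor.
            line.append(read_pixel(row, col))
        frame.append(line)
    return frame


def fake_read_pixel(row: int, col: int) -> int:
    """Stand-in for the ADC read: returns a simple diagonal gradient."""
    return (row + col) % 256


frame = scan_array(fake_read_pixel)
pixel_count = ROWS * COLS
per_pixel_ms = SCAN_SECONDS / pixel_count * 1000.0

print(f"{pixel_count} pixels, roughly {per_pixel_ms:.1f} ms per pixel")
```

Dividing the five-second scan evenly across 1,024 pixels leaves a little under 5 ms for each one, assuming the time is spread uniformly, which the post does not confirm.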

[Sean] has had this project cooking for a while – in fact, the multiplexers he used for the camera came up as a separate project back in 2018. We’re glad to see that he got the rest built, even with the recycled lens he used. One wonders how a 3D-printed lens would work in front of that sensor.

VGA Signal In A Browser Window, Thanks To Reverse Engineering

[Ben Cox] found some interesting USB devices on eBay. The Epiphan VGA2USB LR accepts VGA video on one end and presents it as a USB webcam-like video signal on the other. Never have to haul a VGA monitor out again? Sounds good to us! The devices are old and abandoned hardware, but they do claim Linux support, so one BUY button mash later and [Ben] was waiting patiently for them in the mail.

But when they did arrive, the devices didn’t enumerate as a USB UVC video device as expected. The vendor has a custom driver, support for which ended in Linux 4.9 — meaning none of [Ben]’s machines would run it. By now [Ben] was curious about how all this worked and began digging, aiming to create a userspace driver for the device. He was successful, and with his usual detail [Ben] explains not only the process he followed to troubleshoot the problem but also how these devices (and his driver) work. Skip to the end of the project page for the summary, but the whole thing is worth a read.

The resulting driver is not optimized, but will do about 7 fps. [Ben] even rigged up a small web server inside the driver to present a simple interface for the video in a pinch. It can even record its output to a video file, which is awfully handy. The code is available on his GitHub repository, so give it a look and maybe head to eBay for a bit of bargain-hunting of your own.