For decades, humankind has entertained the notion that we could tweak the Earth’s atmosphere or biosphere to, for example, undo the harms of climate change, or otherwise steer the climate to our own benefit. Such schemes often involve spreading certain substances in parts of the atmosphere to reflect incoming sunlight, retain heat, or induce rain.

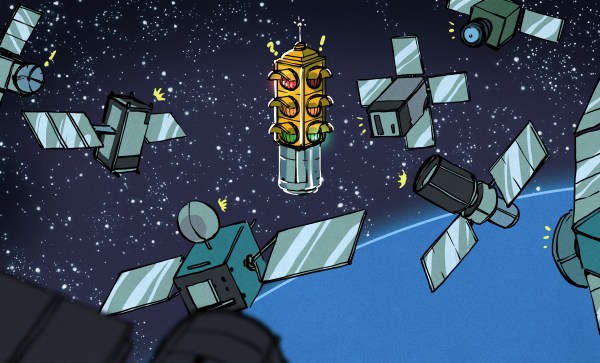

Yet while these intentional experiments have so far been very limited in scope, with most proposals dying somewhere before implementation, we have already embarked on a potentially planet-wide atmospheric reconfiguration that could affect life on Earth for centuries to come. This accidental experiment comes in the form of rocket stages, discarded satellites, and other human-made space litter that burn up in the atmosphere at an ever-increasing rate.

Rather than burning up cleanly into harmless components, this debris introduces metals and other compounds into the upper atmosphere. The long-term effects are still uncertain, but since the most dire scenarios involve significant climate change and degradation of the ozone layer, we ought to figure this one out sooner rather than later.

Continue reading “Accidental Climate Engineering With Disintegrating Satellites”