Starting an open source project is easy: write some code, pick a compatible license, and push it up to GitHub. Extra points awarded if you came up with a clever logo and remembered to actually document what the project is supposed to do. But maintaining a large open source project and keeping its community happy while continuing to evolve and stay on the cutting edge is another story entirely.

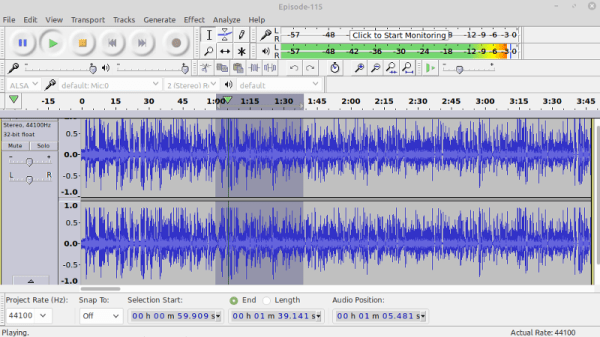

Just ask the maintainers of Audacity. The GPLv2 licensed multi-platform audio editor has been providing a powerful and easy to use set of tools for amateurs and professionals alike since 1999, and is used daily by…well, it’s hard to say. Millions, tens of millions? Nobody really knows how many people are using this particular tool and on what platforms, so it’s not hard to see why a pull request was recently proposed which would bake analytics into the software in an effort to start answering some of these core questions.

Now, the sort of folks who believe that software should be free as in speech tend to be a prickly bunch. They hold privacy in high regard, and any talk of monitoring their activity is always going to be met with strong resistance. Sure enough, the comments for this particular pull request went south quickly. The accusations started flying, and it didn’t take long before the F-word started getting bandied around: fork. If Audacity was going to start snooping on its users, they argued, then it was time to take the source and spin it off into a new project free of such monitoring.

Now, the sort of folks who believe that software should be free as in speech tend to be a prickly bunch. They hold privacy in high regard, and any talk of monitoring their activity is always going to be met with strong resistance. Sure enough, the comments for this particular pull request went south quickly. The accusations started flying, and it didn’t take long before the F-word started getting bandied around: fork. If Audacity was going to start snooping on its users, they argued, then it was time to take the source and spin it off into a new project free of such monitoring.

The situation may sound dire, but truth be told, it’s a common enough occurrence in the world of free and open source software (FOSS) development. You’d be hard pressed to find any large FOSS project that hasn’t been threatened with a fork or two when a subset of its users didn’t like the direction they felt things were moving in, and arguably, that’s exactly how the system is supposed to work. Under normal circumstances, you could just chalk this one up to Raymond’s Bazaar at work.

But this time, things were a bit more complicated. Proposing such large and sweeping changes with no warning showed a troubling lack of transparency, and some of the decisions on how to implement this new telemetry system were downright concerning. Combined with the fact that the pull request was made just days after it was announced that Audacity was to be brought under new management, there was plenty of reason to sound the alarm.

Continue reading “Telemetry Debate Rocks Audacity Community In Open Source Dustup”