We’ve probably all seen some old newsreel or documentary from The Before Times where the narrator, using his best Mid-Atlantic accent, described those newfangled computers as “thinking machines,” or better yet, “electronic brains.” It was an apt description, at least considering that the intended audience had no other frame of reference at a time when the most complex machine they were familiar with was a telephone. But what if the whole “brain” thing could be taken more literally? We’ll have to figure that out soon if these computers powered by miniature human brains end up getting any traction.

neuron: 13 Articles

3D Printing Functional Human Brain Tissue For Research Purposes

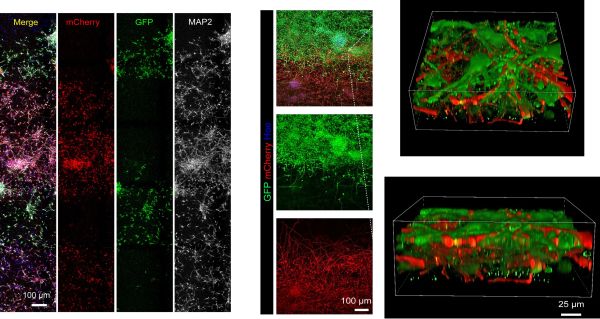

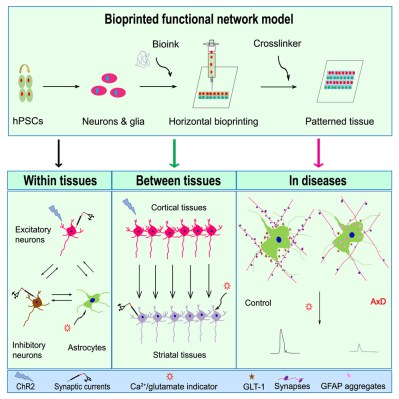

The brain is probably the least explored organ, largely because of the difficulty of studying it in situ rather than in slices under a microscope. Even growing small organoids out of neurons provides few clues, as this is not how brain tissue is normally organized. A possible breakthrough may have been found here by a group of researchers whose article in Cell Stem Cell details how they created functional human neural tissues using a commercial 3D bioprinter.

As detailed by [Yuanwei Yan] and colleagues in their research article, the issue with previous approaches was that although these would print layers of neurons, they would fail to integrate as in the brain. In the brain’s tissues, we see a wide variety of neurons and supportive cells, all of which integrate in a specific way to form functioning neuron-to-neuron and neuron-to-glial connections with expected neural activity. The accomplishment of this research team is 3D bioprinting of neural tissues with the necessary functional connections.

Continue reading “3D Printing Functional Human Brain Tissue For Research Purposes”

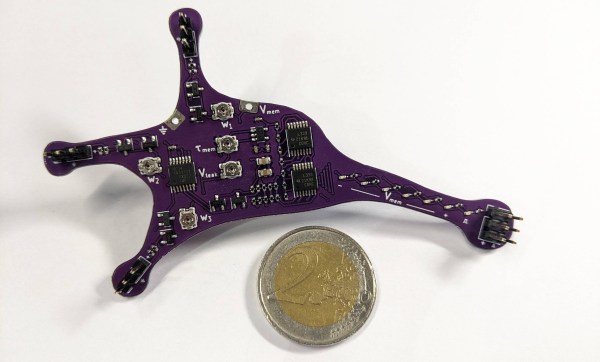

Hackaday Prize 2023: Explore The Basics Of Neuroscience With This Electronic Neuron

Brains are the most complex systems in the universe, but their basic building blocks are surprisingly simple — the complexity arises from billions of neurons, axons and synapses working together. Simulating an entire brain therefore requires vast computing resources, but if it’s just a few cells you’re interested in, you don’t need much: a handful of op-amps and transistors will do the job, as [Sebastian Billaudelle] has demonstrated. He has designed an electronic neuron called Lu.i that does everything a real neuron does, in a convenient package suitable for educational use.

[Sebastian]’s neuron implements what’s known as the leaky integrate-and-fire model, first proposed by [Louis Lapicque] as a simple model for a neuron’s behavior. Basically, the neuron acts as an integrator that stores all incoming charge in a capacitor and generates a spiky output signal once its voltage reaches a certain threshold level. The capacitor is slowly discharged, however, which means the neuron will only “fire” when it gets a strong enough input signal.
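The leaky integrate-and-fire behavior described above can be sketched in a few lines of Python. This is only a rough numerical illustration, not a model of the Lu.i circuit itself: the function name `simulate_lif`, the time constant, the threshold and the input currents are all assumed values chosen to make the behavior visible.

```python
def simulate_lif(input_current, dt=1e-3, tau=20e-3, resistance=1.0,
                 v_rest=0.0, v_threshold=1.0, v_reset=0.0):
    """Simulate a leaky integrate-and-fire neuron with forward Euler steps.

    All parameter values are illustrative assumptions, not Lu.i's values.
    Returns (membrane voltages, step indices at which the neuron fired).
    """
    v = v_rest
    voltages, spikes = [], []
    for step, current in enumerate(input_current):
        # The leak pulls v back toward rest while the input charges the "capacitor".
        v += (-(v - v_rest) + resistance * current) * dt / tau
        if v >= v_threshold:
            # Threshold reached: record a spike and reset the voltage.
            spikes.append(step)
            v = v_reset
        voltages.append(v)
    return voltages, spikes


# A weak constant input leaks away before it can reach the threshold...
weak_voltages, weak_spikes = simulate_lif([0.5] * 1000)
# ...while a stronger input charges the neuron fast enough to fire repeatedly.
strong_voltages, strong_spikes = simulate_lif([1.5] * 1000)
print(len(weak_spikes), len(strong_spikes))
```

With these assumed values, the weak input settles below the threshold and never produces a spike, while the strong input makes the neuron fire at regular intervals.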

A couple of MCP6004 op-amps implement this model, with an LM339 comparator acting as the threshold detector. The neuron’s inputs are generated by electronic synapses made from logic-level MOSFETs. These circuits route signals between different neurons and can be manually set to either source or sink current, thereby increasing or decreasing the neuron’s voltage level.

All of this is built onto a neat purple PCB in the shape of a nerve cell, with external connections on the tips of its dendrites. The neuron’s internal state is made visible by an LED bar graph, giving the user an immediate feel for what’s going on inside the network. Multiple neurons can be connected together to form reasonably complex networks that can implement things like oscillators or logic functions, examples of which are shown on the project’s GitHub page.

The Lu.i project is a great way to teach the basics of neuroscience, turning dry differential equations into a neat display of signals racing around a network. Neurons are fascinating things that we’re learning more about every day, enabling things like brain-computer interfaces and neuromorphic computing.

MRI Resolution Progresses From Millimeters To Microns

Neuroscientists have been mapping and recreating the nervous systems and brains of various animals since the microscope was invented, and other imaging techniques have even let them map out entire brain structures, with perhaps the most famous example being the 302-neuron brain of a roundworm. Studies like these advanced neuroscience considerably, but even better imaging technology is needed to study more advanced neural structures like those found in a mouse or human. This advanced MRI machine may be just the thing to help gain a better understanding of these structures.

A research team led by Duke University developed this new MRI technology using an incredibly powerful 9.4 Tesla magnet and specialized gradient coils, leading to an image resolution an impressive six orders of magnitude higher than a typical MRI. The voxels in the image measure only 5 microns across, compared to the millimeter-level resolution available on modern MRI machines, and can reveal microscopic details within brain tissues that were previously unattainable. This breakthrough in MRI resolution has the potential to significantly advance understanding of the neural networks found in humans by first studying neural structures in mice at this unprecedented detail.
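To put those numbers in perspective, here is a quick back-of-the-envelope calculation. It assumes a 1 mm voxel edge for a typical scanner, which is only a stand-in for the "millimeter-level" figure above; the 5 micron value comes from the article. Under that assumption, a gain of roughly six orders of magnitude only appears when comparing voxel volumes rather than edge lengths.

```python
import math

clinical_voxel_um = 1000.0  # assumed "millimeter-level" voxel edge, in microns
new_voxel_um = 5.0          # voxel edge quoted in the article, in microns

# Improvement along one edge of the voxel.
linear_gain = clinical_voxel_um / new_voxel_um
# Improvement in voxel volume: the edge gain applies in all three dimensions.
volume_gain = linear_gain ** 3

print(f"linear: {linear_gain:.0f}x, "
      f"{math.log10(linear_gain):.1f} orders of magnitude")
print(f"volume: {volume_gain:.0f}x, "
      f"{math.log10(volume_gain):.1f} orders of magnitude")
```

The exact ratio depends heavily on which scanner is taken as the baseline, so treat the result as an order-of-magnitude estimate only.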

The researchers are hopeful that this higher-powered MRI microscope will lead to new insights and translate directly into advancements in healthcare. Presuming that it can be replicated, used on humans safely, and made affordable, we would expect it to find its way into medical centers as soon as possible. Not only that, but research into neuroscience has plenty of applications outside of healthcare too, like the aforementioned 302-neuron brain of the Caenorhabditis elegans roundworm, which has been put to work in various robotics platforms to great effect.

Continue reading “MRI Resolution Progresses From Millimeters To Microns”

DIY Neuroscience Hack Chat

Join us on Wednesday, February 24 at noon Pacific for the DIY Neuroscience Hack Chat with Timothy Marzullo!

Watch a film about a mad scientist from the golden age of Hollywood and chances are good that among the other set pieces, you’ll see human brains floating in jars of cloudy fluid wired up to electrodes and fancy machines. It’s all made up, of course, but tropes work because they’re based on a kernel of truth, and we in the audience know that our brains and the other parts of our nervous system do indeed work on electricity. Or more precisely, excitable tissues in our nervous systems pass electrochemical signals between themselves as waves of potential across cell membranes.

Studying this electrical world locked away inside our heads is a challenging, but by no means impossible, pursuit. Usable signals can be picked up, amplified, digitized, and recorded to help us understand what’s going on when we think, feel, move, sleep, wake, or just be. Neuroscience has made tremendous strides looking at these signals, but the equipment to do so has largely remained the province of large universities and teaching hospitals with ample budgets, leaving the amateur neuroscientist out of luck.

Tim Marzullo, co-founder of Backyard Brains, is looking to change all that. While working on his Ph.D. in neuroscience at the University of Michigan, he and Greg Gage looked for ways to make the tools of neuroscience research affordable to everyone. The result is the Neuron SpikerBox, a low-cost bioamplifier that can tap into the “spikes” of action potential in live neurons. Open-source tools like these have helped educators bring neuroscience experiments to STEM students, and even helped other scientists set up novel, low-cost experiments.

Tim will join us on the Hack Chat to talk about doing DIY neuroscience and designing the instruments that make it possible. Bring your “mad scientist” questions as we push back the veil of ignorance on how our brains work, one neuron at a time.

Our Hack Chats are live community events in the Hackaday.io Hack Chat group messaging. This week we’ll be sitting down on Wednesday, February 24 at 12:00 PM Pacific time (UTC-8). If time zones have you tied up, we have a handy time zone converter.

Click that speech bubble to the right, and you’ll be taken directly to the Hack Chat group on Hackaday.io. You don’t have to wait until Wednesday; join whenever you want and you can see what the community is talking about.

Open-Source Neuroscience Hardware Hack Chat

Join us on Wednesday, February 19 at noon Pacific for the Open-Source Neuroscience Hardware Hack Chat with Dr. Alexxai Kravitz and Dr. Mark Laubach!

There was a time when our planet still held mysteries, and pith-helmeted or fur-wrapped explorers could sally forth and boldly explore strange places for what they were convinced was the first time. But with every mountain climbed, every depth plunged, and every desert crossed, fewer and fewer places remained to be explored, until today there’s really nothing left to discover.

Unless, of course, you look inward to the most wonderfully complex structure ever found: the brain. In humans, the 86 billion neurons contained within our skulls make trillions of connections with each other, weaving the unfathomably intricate pattern of electrochemical circuits that make you, you. Wonders abound there, and anyone seeing something new in the space between our ears really is laying eyes on it for the first time.

But the brain is a difficult place to explore, and specialized tools are needed to learn its secrets. Lex Kravitz, from Washington University, and Mark Laubach, from American University, are neuroscientists who’ve learned that sometimes you have to invent the tools of the trade on the fly. While exploring topics as wide-ranging as obesity, addiction, executive control, and decision making, they’ve come up with everything from simple jigs for brain sectioning to full feeding systems for rodent cages. They incorporate microcontrollers, IoT, and tons of 3D-printing to build what they need to get the job done, and they share these designs on OpenBehavior, a collaborative space for the open-source neuroscience community.

Join us for the Open-Source Neuroscience Hardware Hack Chat this week where we’ll discuss the exploration of the real final frontier, and find out what it takes to invent the tools before you get to use them.

Our Hack Chats are live community events in the Hackaday.io Hack Chat group messaging. This week we’ll be sitting down on Wednesday, February 19 at 12:00 PM Pacific time. If time zones have got you down, we have a handy time zone converter.

Click that speech bubble to the right, and you’ll be taken directly to the Hack Chat group on Hackaday.io. You don’t have to wait until Wednesday; join whenever you want and you can see what the community is talking about.

Continue reading “Open-Source Neuroscience Hardware Hack Chat”

Brain Cell Electronics Explains Wetware Computing Power

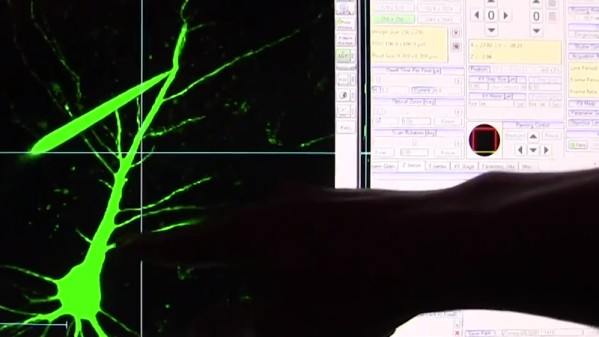

Neural networks use electronic analogs of the neurons in our brains. But it doesn’t seem likely that just making enough electronic neurons would create a human-brain-like thinking machine. Consider that animal brains are sometimes larger than ours (a sperm whale’s brain weighs 17 pounds), yet we don’t think they are as smart as humans or even dogs, which have much smaller brains. MIT researchers have discovered differences between human brain cells and animal ones that might help clear up some of that mystery. You can see a video about the work they’ve done below.

Neurons have long finger-like structures known as dendrites. These act like comparators, taking input from other neurons and firing if the inputs exceed a threshold. As with any kind of conductor, the longer the dendrite, the weaker the signal. Naively, this seems bad for humans. To understand why, consider a rat. A rat’s cortex has six layers, just like ours. However, whereas the rat’s brain is tiny and 30% cortex, our brains are much larger and 75% cortex. So a dendrite reaching from layer 5 to layer 1 has to be much longer than the analogous dendrite in the rat’s brain.
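The comparator-plus-lossy-wire picture above can be made concrete with a toy calculation. This sketch assumes a simple exponential falloff of signal with distance and uses entirely made-up numbers for the length constant, threshold, input strengths and dendrite lengths. None of them come from the MIT study; they only show how the same inputs can fire a neuron through a short dendrite but not through a long one.

```python
import math

# All numbers below are hypothetical, chosen only to make the effect visible.
LENGTH_CONSTANT_UM = 500.0  # assumed distance over which a signal falls to 1/e
THRESHOLD = 1.0             # assumed firing threshold, arbitrary units


def attenuate(signal, dendrite_length_um):
    """Exponential decay of a signal travelling along a passive dendrite."""
    return signal * math.exp(-dendrite_length_um / LENGTH_CONSTANT_UM)


def fires(signals, dendrite_length_um):
    """Comparator: fire if the summed, attenuated inputs exceed the threshold."""
    total = sum(attenuate(s, dendrite_length_um) for s in signals)
    return total > THRESHOLD


inputs = [2.0, 2.0, 2.0]
short_fires = fires(inputs, 500.0)   # shorter, rat-like dendrite (assumed length)
long_fires = fires(inputs, 1500.0)   # longer, human-like dendrite (assumed length)
print(short_fires, long_fires)
```

In this toy setup, the identical set of inputs crosses the threshold after the short run but arrives too weak after the long one.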

These longer dendrites do lead to more loss in human brains and the MIT study confirmed this by using human brain cells — healthy ones removed to get access to diseased brain cells during surgery. The researchers think that this greater loss, however, is actually a benefit to humans because it helps isolate neurons from other neurons leading to increased computing capability of a single neuron. One of the researchers called this “electrical compartmentalization.” Dig into the conclusions found in the research paper.

We couldn’t help but wonder if this research would offer new insights into neural network computing. We already use numeric weights to simulate dendrite threshold action, so presumably learning algorithms already weaken links where that helps. However, maybe something to take away from this is that less interaction between neurons and groups of neurons may be more helpful than more interaction.

Watching them probe neurons under the microscope reminded us of probing on an IC die. There’s a close tie between understanding the brain and building better machines so we try to keep an eye on the research going on in that area.

Continue reading “Brain Cell Electronics Explains Wetware Computing Power”