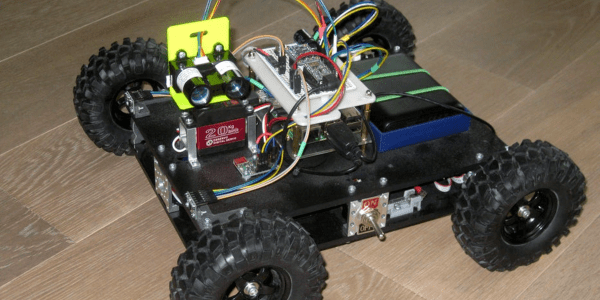

Computer engineering student [sherwin-dc] had a rover project which required streaming video through an ESP32 to be accessed by a web server. He couldn’t find documentation for the ESP32’s standard camera interface, and even if he had, that approach used too many I/O pins. Instead, [sherwin-dc] decided to shoehorn the video into an I2S stream. It helped that he had access to an Altera MAX 10 FPGA to process the video signal from the camera. He succeeded, but it took a lot of experimenting to work around the ESP32’s limited resources. Ultimately [sherwin-dc] settled on QVGA resolution, 320×240 pixels at 8 bits per pixel, which means each frame uses just 77 KB (76,800 bytes) of precious ESP32 RAM.

His design uses a 2.5 MHz SCK, which equates to about four frames per second. He notes that with SCK rates in the tens of MHz, the frame rate could in theory be significantly higher. In practice, once other system processing is taken into account, the ESP32 can’t keep up with even four FPS. In the end, he was lucky to get 0.5 FPS of throughput, but that was adequate for controlling the rover (see animated GIF below the break). That said, if your design has a more powerful processor, this technique might be of interest. [sherwin-dc] notes that the standard camera drivers for the ESP32 use I2S under the hood, so the concept isn’t crazy.
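For anyone who wants to check the numbers, here is a rough back-of-the-envelope sketch in Python. It assumes one bit is transferred per SCK cycle on a single data line with no framing or blanking overhead, which is our simplification and not necessarily how [sherwin-dc]’s link behaves. The function names are made up for illustration.

```python
# Back-of-the-envelope numbers for QVGA video over a serial clock.
# Assumption (not from the project): 1 bit per SCK cycle, single data
# line, zero protocol overhead. Real throughput will be lower.

WIDTH, HEIGHT = 320, 240   # QVGA
BITS_PER_PIXEL = 8


def frame_bytes(width=WIDTH, height=HEIGHT, bpp=BITS_PER_PIXEL):
    """Size of one uncompressed frame in bytes."""
    return width * height * bpp // 8


def max_fps(sck_hz, width=WIDTH, height=HEIGHT, bpp=BITS_PER_PIXEL):
    """Upper bound on frame rate for a given bit clock."""
    return sck_hz / (width * height * bpp)


if __name__ == "__main__":
    print(f"Frame size: {frame_bytes()} bytes")
    for sck in (2.5e6, 10e6, 20e6):
        print(f"SCK {sck / 1e6:>4.1f} MHz: at most {max_fps(sck):.1f} FPS")
```

At 2.5 MHz the bound works out to just over four frames per second, matching the figure above. The clock only sets a ceiling; as the project shows, what the processor can do with each frame is the real limit.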

We’ve covered several projects that generate video over I2S before, including this piece from back in 2019. Have you ever commandeered a protocol for “off-label” use?
