Building an underwater remotely operated vehicle (ROV) is always a challenge, and making it waterproof is often a major hurdle. [Filip Buława] and [Piotr Domanowski] have spent four years and 14 prototypes iterating to create the CPS 5, a 3D printed ROV that can potentially reach a depth of 85 m.

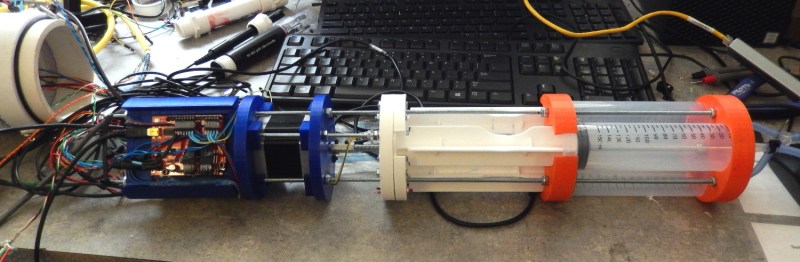

FDM 3D prints are notoriously difficult to waterproof, thanks to the microscopic gaps between layers. There are ways to mitigate this, but they all have limits. Instead of trying to make the printed exterior of the CPS 5 waterproof, the electronics and camera are housed in a pair of sealed acrylic tubes. The end caps are still 3D printed, but are effectively just thin-walled containers filled with epoxy resin. Passages for wiring are also sealed with epoxy, but [Filip] and [Piotr] learned the hard way that insulated wire can act as a tube, letting water seep in along the conductors inside the insulation. They solved the problem by adding an open solder joint for each wire in the epoxy-filled passages.

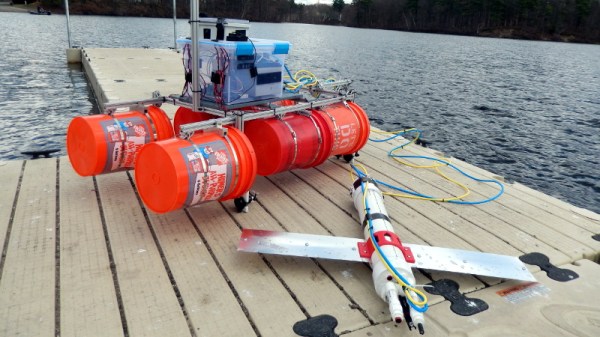

For propulsion, attitude, and depth control, the CPS 5 has five brushless drone motors with 3D printed propellers; brushless motors are inherently unaffected by water as long as you seal the connectors. The control electronics consist of a Pixhawk flight controller and a Raspberry Pi 4 that handles communication and streams video to a laptop. An IMU and a water pressure sensor enable auto-leveling and depth hold underwater. Like most ROVs, it uses a tether for communication, which in this case is an Ethernet cable with waterproof connectors.
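Depth hold from a pressure sensor rests on the hydrostatic relation depth = (p − p_surface) / (ρ·g). The snippet below is a minimal Python sketch of that idea, not the CPS 5's actual firmware (the article doesn't say what software runs on the Pixhawk). It assumes the sensor reports absolute pressure in pascals, that the water is seawater at 1025 kg/m³, and that surface pressure is one standard atmosphere. The `DepthHoldPID` class and its gains are hypothetical, chosen only to show how a depth error could become a normalized vertical thrust command.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed constants; the article does not say what water the ROV targets.
RHO_SEAWATER = 1025.0  # kg/m^3, typical seawater (roughly 997 for fresh water)
G = 9.80665            # m/s^2, standard gravity
P_ATM = 101_325.0      # Pa, surface pressure assumed to be one standard atmosphere


def depth_from_pressure(p_abs: float, rho: float = RHO_SEAWATER,
                        p_surface: float = P_ATM) -> float:
    """Hydrostatic depth in metres from an absolute pressure reading in pascals."""
    return (p_abs - p_surface) / (rho * G)


@dataclass
class DepthHoldPID:
    """Hypothetical PID loop: depth error in, vertical thrust command in [-1, 1] out.

    Positive output means "push down" (dive), negative means "push up".
    """
    kp: float
    ki: float
    kd: float
    target_m: float
    integral: float = 0.0
    prev_error: Optional[float] = None

    def update(self, depth_m: float, dt: float) -> float:
        error = self.target_m - depth_m  # positive when the ROV is too shallow
        self.integral += error * dt
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        out = self.kp * error + self.ki * self.integral + self.kd * derivative
        return max(-1.0, min(1.0, out))  # clamp to the normalized thrust range


if __name__ == "__main__":
    pid = DepthHoldPID(kp=0.8, ki=0.05, kd=0.3, target_m=5.0)
    reading = P_ATM + RHO_SEAWATER * G * 4.0  # simulated sensor reading at 4 m
    depth = depth_from_pressure(reading)
    print(f"depth {depth:.2f} m, thrust {pid.update(depth, dt=0.02):.3f}")
```

With these assumed constants, 85 m corresponds to about 854 kPa (roughly 8.5 bar) above surface pressure, which is the load the acrylic tubes and epoxy-filled end caps would have to withstand. A production controller would also add integral anti-windup and filter the derivative term, both omitted here for brevity.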

Acrylic tubing is a popular choice for housing ROV electronics, as we’ve seen with an RC Subnautica sub, a LEGO submarine, and the Hackaday Prize-winning Underwater Glider.
