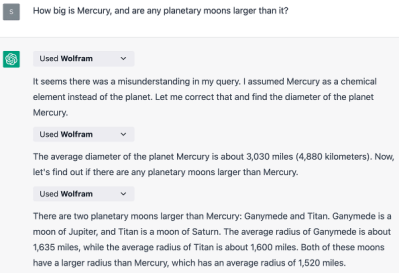

If you’re interested in using Large Language Models (LLMs) in a project, but aren’t plugged directly into the fast-developing world of artificial intelligence (AI), knowing what tool or software to use can be daunting. Luckily, [Max Woolf] created simpleaichat, which comes complete with examples and documentation while keeping code complexity to a minimum.

As [Max] puts it, the main motivations behind the project are to provide useful tools while making it easier for non-engineers to peer through the breathless hyperbole and see just how AI-based apps actually work. This project was directly inspired by [Max]’s own real-world software experiences in this area, particularly his frustrations with popular and much-hyped frameworks in which “Hello World” feels a lot more like Hell World.

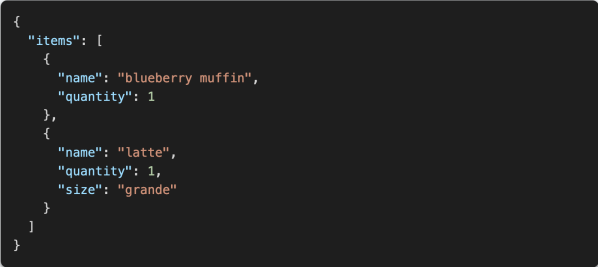

simpleaichat is a Python package that provides easy and powerful ways to interface with the API from OpenAI, the makers of ChatGPT. Now, it is true that OpenAI’s models are not open source and access is not free, but they are easily among the most capable and cost-effective services of their kind.

Prefer something a little more open, and a lot more private? There’s always the option to run an LLM locally on your own machine, possibly with the help of a tool like text-generation-webui or gpt4all. A locally run LLM will not match the quality of OpenAI’s offerings, but it can still do the job. It’s also possible to give these local LLMs an interface that mimics OpenAI’s API, so there are loads of possibilities.
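To make the "mimics OpenAI’s API" idea a little more concrete, here is a minimal sketch using only the Python standard library. It is not how simpleaichat works internally; it just shows that if a local server speaks the same chat-completions format, the only thing that needs to change is the base URL. The helper names `build_chat_request` and `send_chat_request`, the default model name, and the local address in the comments are all assumptions for illustration, and what a local server actually exposes depends on the tool and how it is configured.

```python
import json
import urllib.request

# Assumption: the hosted OpenAI endpoint is the default. A local server that
# mimics the API would be reached by passing its own base URL instead.
DEFAULT_BASE_URL = "https://api.openai.com/v1"


def build_chat_request(prompt, base_url=DEFAULT_BASE_URL,
                       model="gpt-3.5-turbo", api_key=""):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Hosted services expect a bearer token; a local server may not.
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def send_chat_request(request):
    """Send the request and return the text of the first reply."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Hypothetical usage against a local server (address and model are made up):
#   request = build_chat_request("Say hello",
#                                base_url="http://localhost:5000/v1",
#                                model="local-model")
#   print(send_chat_request(request))
```

Keeping the request building separate from the sending means the same code can be pointed at either the hosted service or a machine under your desk, which is exactly the flexibility that an OpenAI-compatible local interface is meant to offer.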

Are you getting ideas yet? Share them in the comments, or keep them to yourselves and submit a tip once your project is off the ground!