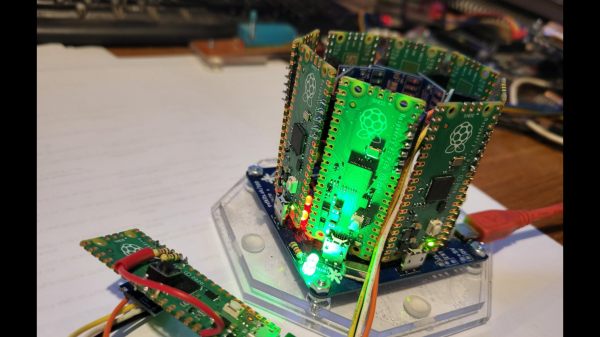

[ExtremeElectronics] cleverly demonstrates that if one Raspberry Pi Pico is good, then nine must be awesome. The PicoCray project connects multiple Raspberry Pi Pico microcontroller modules into a parallel architecture leveraging an I2C bus to communicate between nodes.

The same PicoCray code runs on all nodes, but a grounded pin on one of the Pico modules indicates that it is to operate as the controller node. All of the remaining nodes operate as processor nodes. Each processor node implements a random back-off technique to request an address from the controller on the shared bus. After waiting a random amount of time, a processor will check if the bus is being used. If the bus is in use, the processor will go back to waiting. If the bus is not in use, the processor can request an address from the controller.
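The back-off scheme described above can be sketched as a small tick-based simulation. This is not the PicoCray firmware, which runs on real I2C hardware; the function name `assign_addresses`, the starting address `0x10`, and the `max_backoff` window are all assumptions made for illustration. Within one tick the simulation lets the first processor that finds the bus idle claim it, which glosses over the case on real hardware where two nodes sense an idle bus at the same moment.

```python
import random


def assign_addresses(num_processors, first_address=0x10, max_backoff=8, seed=0):
    """Simulate processor nodes claiming addresses over one shared bus.

    All names and constants here are hypothetical, chosen for the sketch.
    """
    rng = random.Random(seed)
    # Each unaddressed processor counts down a random number of ticks.
    timers = {p: rng.randint(1, max_backoff) for p in range(num_processors)}
    addresses = {}
    next_address = first_address

    while timers:
        bus_busy = False  # the bus is idle at the start of every tick
        for proc in sorted(timers):
            timers[proc] -= 1
            if timers[proc] > 0:
                continue  # still waiting out its random delay
            if bus_busy:
                # Bus in use: pick a new random delay and go back to waiting.
                timers[proc] = rng.randint(1, max_backoff)
            else:
                # Bus idle: claim it and request an address from the controller.
                bus_busy = True
                addresses[proc] = next_address
                next_address += 1
        # Processors that now hold an address stop contending for the bus.
        for proc in addresses:
            timers.pop(proc, None)

    return addresses


if __name__ == "__main__":
    for proc, addr in sorted(assign_addresses(8).items()):
        print(f"processor {proc} -> address {addr:#04x}")
```

Every processor eventually gets a distinct address because at most one request succeeds per tick and the losers simply retry after a fresh random delay.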

Once a processor node has an address, it can be sent tasks from the controller node. In the example application, these tasks involve computing elements of the Mandelbrot Set. The controller node allocates the particular elements to be computed in each task, then collects the results from each processor node and aggregates them for display.
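As a rough illustration of that allocate, compute, and aggregate pattern, the sketch below splits a small Mandelbrot image into interleaved rows, one set per processor. The row-based split, the image size, the coordinate window, and every function name are assumptions for the example; the project may divide the work differently, and here the "nodes" are plain function calls rather than Picos on a bus.

```python
def mandelbrot_iterations(c, max_iter=50):
    """Count iterations of z = z*z + c before |z| exceeds 2."""
    z = 0j
    for n in range(max_iter):
        if abs(z) > 2.0:
            return n
        z = z * z + c
    return max_iter


def compute_rows(rows, width, height, max_iter=50):
    """Work done by one (simulated) processor node for its assigned rows."""
    result = {}
    for y in rows:
        im = -1.0 + 2.0 * y / (height - 1)  # map row to imaginary axis
        result[y] = [
            mandelbrot_iterations(
                complex(-2.0 + 3.0 * x / (width - 1), im), max_iter
            )
            for x in range(width)
        ]
    return result


def render(width=32, height=16, num_processors=8):
    """Controller side: hand out rows, then gather results into one image."""
    # Processor p gets rows p, p + num_processors, p + 2 * num_processors, ...
    tasks = [range(p, height, num_processors) for p in range(num_processors)]
    image = [None] * height
    for rows in tasks:
        for y, row in compute_rows(rows, width, height).items():
            image[y] = row
    return image


if __name__ == "__main__":
    for row in render():
        print("".join("#" if n == 50 else "." for n in row))
```

Because each row depends only on its own coordinates, the aggregated image is the same regardless of how many processors share the work, which is what makes the problem a convenient demo for a cluster like this.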

The name for this project is inspired by Seymour Cray. Our Father of the Supercomputer biography tells his story, including why the Cray-1 Supercomputer was referred to as “the world’s most expensive loveseat.” For even more Cray-1 inspiration, check out this Raspberry Pi Zero Cluster.
