You’re at a concert, and a car filled with balloons is in a glass box. As you approach the box, vertical blinds close to block the view directly in front of you. You move left, and more blinds close to block your view. The blinds follow your every move, ensuring you can’t get a close-up view of the car inside. You’ve just met Blind Spot, an interactive art installation by [Brendan Matkin].

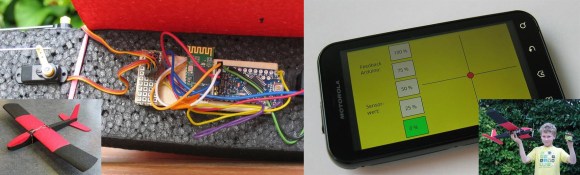

Blind Spot was presented at Beakerhead, an incredible arts and engineering event that takes place every September in Calgary, Canada. Blind Spot consists of a car inside a large wooden box. Windows allow a view into the box, though there are 96 vertical blinds just behind the glass. Each blind is individually controlled by a hobby servo. The servos are wired to six serial servo controllers, all of which are controlled by an Arduino.
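To get a feel for the addressing involved, here is a minimal Python sketch of how a blind number could map to a controller and a channel. It assumes the 96 servos are split evenly, 16 per controller, and numbered left to right; the article doesn't say how [Brendan] actually wired them, and the function name `blind_to_channel` is made up for illustration.

```python
NUM_BLINDS = 96
NUM_CONTROLLERS = 6
# Assumption: an even split, so 16 servos hang off each controller.
BLINDS_PER_CONTROLLER = NUM_BLINDS // NUM_CONTROLLERS


def blind_to_channel(blind_index):
    """Return (controller, channel) for a blind numbered 0..95.

    Hypothetical layout: blinds 0-15 sit on controller 0,
    blinds 16-31 on controller 1, and so on.
    """
    if not 0 <= blind_index < NUM_BLINDS:
        raise ValueError(f"blind index out of range: {blind_index}")
    return divmod(blind_index, BLINDS_PER_CONTROLLER)


if __name__ == "__main__":
    for blind in (0, 15, 16, 95):
        controller, channel = blind_to_channel(blind)
        print(f"blind {blind}: controller {controller}, channel {channel}")
```

With a layout like this, the Arduino would only need to turn a blind number into a controller address and a channel before sending the position command.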

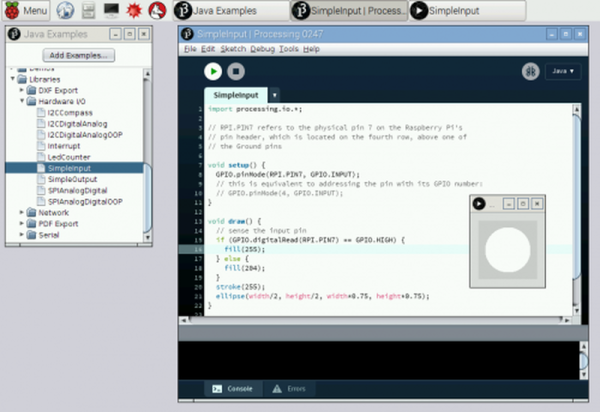

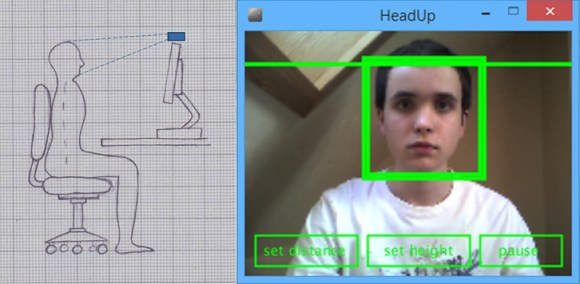

A PC serves as Blind Spot’s brain. For sensors, six wide-angle webcams connect to a standard Windows 7 machine. Running six webcams is not exactly a standard configuration. To handle this, [Brendan] changed the webcams’ friendly names in the Windows registry. The webcam images are read by a Processing sketch. The sketch scans the images and determines which of the 96 blinds to close. The code for Blind Spot is available on GitHub.
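The last step, turning a detected viewer into a set of closed blinds, might look something like the Python sketch below. This is not [Brendan]'s Processing code: it assumes the image scan has already produced each viewer's horizontal position as a fraction from 0.0 (far left) to 1.0 (far right) of the window, and the `half_width` parameter, the number of extra blinds closed on each side, is a made-up tuning value.

```python
NUM_BLINDS = 96


def blinds_to_close(viewer_positions, half_width=4, num_blinds=NUM_BLINDS):
    """Return the sorted indices of blinds to close.

    viewer_positions: horizontal positions in [0.0, 1.0], one per
    detected viewer (assumed to come from the webcam scan).
    half_width: hypothetical number of extra blinds closed on each
    side of the blind directly in front of a viewer.
    """
    closed = set()
    for pos in viewer_positions:
        pos = min(max(pos, 0.0), 1.0)  # clamp stray detections
        center = min(int(pos * num_blinds), num_blinds - 1)
        lo = max(center - half_width, 0)
        hi = min(center + half_width, num_blinds - 1)
        closed.update(range(lo, hi + 1))
    return sorted(closed)


if __name__ == "__main__":
    # One viewer dead center, one near the left edge.
    print(blinds_to_close([0.5, 0.02]))
```

Every blind not in the returned list stays open, so the closed patch slides along the window as the viewer moves.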
