One of the reasons the IBM PC platform became the dominant standard for desktop computers back in the mid-1980s was its open hardware design, based around what would later be called the ISA bus. Any manufacturer could design plug-in cards or even entire computers that were hardware- and software-compatible with the IBM PC. Although ISA has been obsolete for most purposes since the late 1990s, some ISA cards, such as high-quality sound cards, have become so popular among retrocomputing enthusiasts that they now fetch hundreds of dollars on eBay.

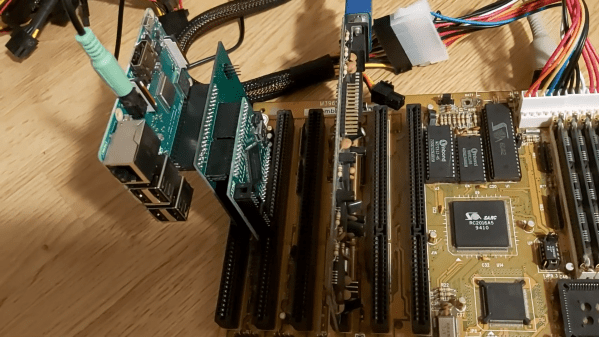

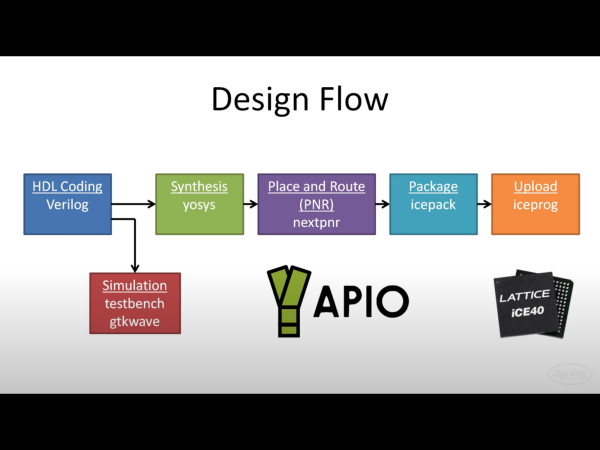

So what can you do if your favorite ISA card is not easily available? One option is to head over to [eigenco]’s GitHub page and check out his FrankenPiFPGA project. It contains a design for a simple ISA plug-in card that hooks up to a Cyclone IV FPGA and a Raspberry Pi. The FPGA connects to the ISA bus and implements the bus protocol, while the Pi communicates with the FPGA through its GPIO ports and emulates any card you want in software. [eigenco]’s current repository contains code for several sound cards as well as a hard drive and a serial mouse. The Pi’s multi-core architecture allows it to run several of these tasks at once while still keeping up with the reasonably high data rate required by the ISA bus.
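To make the division of labor a bit more concrete, here is a minimal Python sketch of what "emulating a card in software" can look like on the Pi side: bus cycles arrive as port reads and writes, and a dispatcher hands each one to whichever emulated device claims that port. This is not taken from [eigenco]’s repository; the names `PortDispatcher` and `AdLibStub` are hypothetical, and the FPGA/GPIO transport is left out entirely. The only hardware detail assumed is the well-known AdLib port pair, 0x388 for the register address and 0x389 for data.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, Protocol


class IsaDevice(Protocol):
    """Anything that can answer ISA I/O port reads and writes."""

    def read(self, port: int) -> int: ...
    def write(self, port: int, value: int) -> None: ...


@dataclass
class AdLibStub:
    """Toy stand-in for an AdLib card (hypothetical, no sound synthesis).

    It only models the address/data register pair: a write to the base
    port selects a register, a write to base + 1 stores a value in it.
    """

    base: int = 0x388
    index: int = 0
    regs: Dict[int, int] = field(default_factory=dict)

    def read(self, port: int) -> int:
        # A real card returns timer status flags here; the stub reports none.
        return 0x00

    def write(self, port: int, value: int) -> None:
        if port == self.base:
            self.index = value & 0xFF            # select register
        elif port == self.base + 1:
            self.regs[self.index] = value & 0xFF  # write selected register


class PortDispatcher:
    """Routes bus cycles to whichever emulated device claims the port."""

    def __init__(self) -> None:
        self._devices: Dict[int, IsaDevice] = {}

    def attach(self, device: IsaDevice, ports: Iterable[int]) -> None:
        for port in ports:
            if port in self._devices:
                raise ValueError(f"port {port:#x} is already claimed")
            self._devices[port] = device

    def io_write(self, port: int, value: int) -> None:
        device = self._devices.get(port)
        if device is not None:
            device.write(port, value)

    def io_read(self, port: int) -> int:
        device = self._devices.get(port)
        if device is None:
            return 0xFF  # assumption: unclaimed ports read back as all ones
        return device.read(port) & 0xFF


# Usage: one emulated card on the bus, programmed the way a DOS game would.
bus = PortDispatcher()
card = AdLibStub()
bus.attach(card, range(0x388, 0x38A))
bus.io_write(0x388, 0x20)  # select register 0x20
bus.io_write(0x389, 0x01)  # store 0x01 in it
```

In a setup like the one described above, several such devices (sound card, hard drive, serial mouse) would be attached to the same dispatcher, each claiming its own port range.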

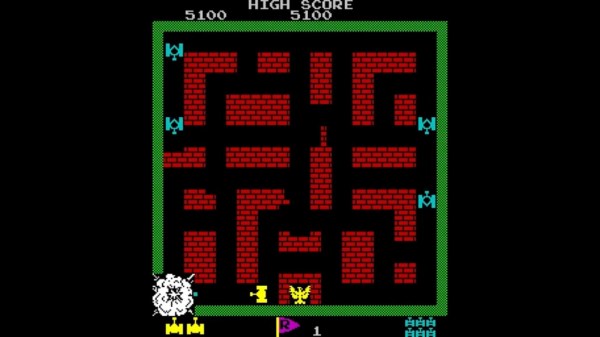

In the videos embedded below you can see [eigenco] demonstrating the system on a 386 motherboard whose only real expansion card is a VGA card for hooking up a monitor. By emulating a hard drive and sound card on the Pi he is able to run a variety of classic DOS games with full sound effects and music. The sound hardware currently supported includes AdLib, 8-bit SoundBlaster, Gravis Ultrasound and Roland MT-32, but any card that’s documented well enough could be emulated.

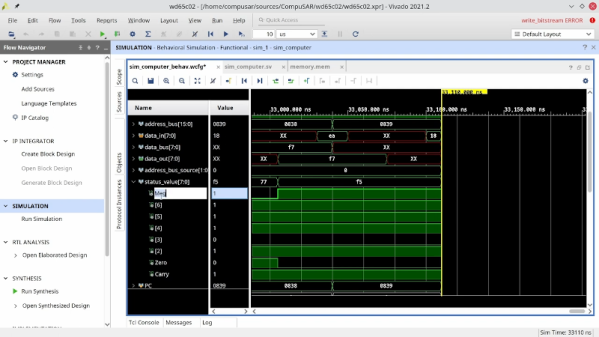

This approach could also come in handy to replace other unobtanium hardware, like rare CD-ROM interfaces. Of course, you could take the concept to its logical extreme and simply implement an entire PC in an FPGA.
