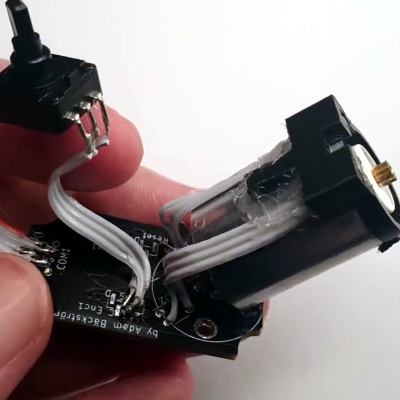

We’re not certain whether [Paul Gould]’s kid’s prosthetic elbow joint is intended for use by a real kid or is part of a robotics project — but it caught our eye for the way it packs the guts of a beefy-looking motorized joint into such a small space.

At its heart is a cycloidal gearbox, in which a toothed belt turns the three small shafts that drive the center gear. The motive power comes from a brushless motor, which is what gives the build its impressively small size. He’s posted a YouTube short showing its internals and the joint doing a small amount of weight lifting, so it evidently has some pulling power.

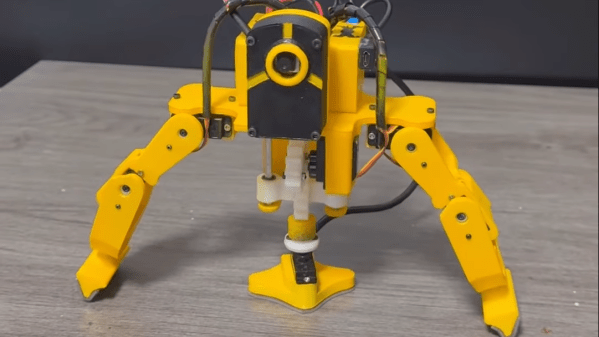

If you’re interested in working with this design, it can be downloaded for 3D printing from Thingiverse. We think it could find an application in plenty of other projects, and we’d be interested to see what people do with it. There’s certainly a comparison to be made with robotic joints which use wires for actuation.