[Mellow_Labs] picked up a few LiDAR matrix sensors and found them very exciting. While a normal time-of-flight sensor accurately measures a single range, the matrix sensor acts like an array of 64 sensors that together build a 2D map of distances from 2 cm to 3.5 m. [Mellow] wanted to add the sensor to his robot to help it see what was in front of it. You can see how it worked out in the video below.

The robot in question is Zippy, a 3D-printed tank-like robot with an ESP32. By default, the robot requires control inputs, but the sensor should enable autonomous operation. For good or ill, about half of the rows of the sensor mounted on Zippy were seeing the floor. That means about 50% of the data went to waste. However, we think having a robot be able to see the floor in front of it might be a good thing.
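To make the floor problem concrete, here is a minimal sketch of throwing away the rows that look down. It assumes the 64 zones arrive as an 8x8 grid of distances in millimeters with row 0 at the top, and that the bottom four rows are the ones seeing the floor. The grid layout, the names, and the row count are assumptions for illustration, not taken from [Mellow]'s code.

```python
from typing import List

# Assumed layout: 8 rows x 8 columns of distances in mm, row 0 at the top.
Frame = List[List[int]]

FLOOR_ROWS = 4  # assumption: the bottom half of the grid sees the floor


def drop_floor_rows(frame: Frame, floor_rows: int = FLOOR_ROWS) -> Frame:
    """Return only the rows that look ahead of the robot, not down at the floor."""
    if floor_rows < 0 or floor_rows > len(frame):
        raise ValueError("floor_rows must be between 0 and the number of rows")
    return frame[: len(frame) - floor_rows]


def nearest_obstacle_mm(frame: Frame) -> int:
    """Smallest distance among the zones in frame (raises ValueError if empty)."""
    return min(d for row in frame for d in row)


if __name__ == "__main__":
    # Top half sees open space at 2 m, bottom half sees the floor at 30 cm.
    frame = [[2000] * 8 for _ in range(4)] + [[300] * 8 for _ in range(4)]
    frame[1][5] = 450  # one real obstacle in the forward-looking rows
    useful = drop_floor_rows(frame)
    print(len(useful), nearest_obstacle_mm(useful))
```

Without the filter, the floor would always register as the nearest "obstacle" in this example. Keeping those rows instead could be useful for spotting drop-offs.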

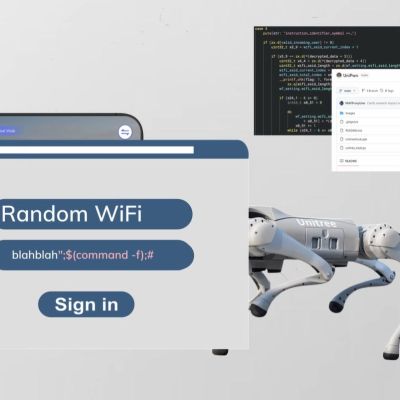

[Mellow] used an LLM to write most of the code, so it took a number of iterations to get things working. Getting there meant decimating even more of the data from the sensor. Still, pretty impressive.
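The post does not spell out how the data was decimated, so the following is only one plausible approach, not [Mellow]'s method: collapse the forward-looking rows into left, center, and right sectors by taking the nearest distance in each, then steer toward the side with more clearance. The 8-column layout, the column split, the idea that column 0 is on the robot's left, and the 250 mm stop threshold are all assumptions for illustration.

```python
from typing import Dict, List

# Assumed layout: each row holds 8 distances in mm, column 0 on the robot's left.
Frame = List[List[int]]

STOP_MM = 250  # hypothetical threshold for "too close to keep driving"

# Hypothetical column ranges (start, end) for each sector.
SECTORS = {"left": (0, 3), "center": (3, 5), "right": (5, 8)}


def sector_minimums(rows: Frame) -> Dict[str, int]:
    """Reduce the grid to three numbers: the nearest distance in each sector."""
    return {
        name: min(d for row in rows for d in row[start:end])
        for name, (start, end) in SECTORS.items()
    }


def choose_action(rows: Frame, stop_mm: int = STOP_MM) -> str:
    """Drive forward if the center is clear, otherwise turn toward more space."""
    mins = sector_minimums(rows)
    if mins["center"] > stop_mm:
        return "forward"
    return "turn_left" if mins["left"] >= mins["right"] else "turn_right"


if __name__ == "__main__":
    rows = [[1000] * 8 for _ in range(4)]
    rows[2][3] = 100   # something close, dead ahead
    rows[0][1] = 150   # and something on the left as well
    print(sector_minimums(rows), choose_action(rows))
```

Reducing 64 readings to three throws away a lot of detail, but it leaves a decision that is easy to debug on a small robot.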

Want to learn more about ToF sensors? Or if you want to focus on the practical, there’s code you can borrow.