We all know that version one of a project is usually a stinker, at least in retrospect. Sure, it gets the basic idea into concrete form, but all it really does is set the stage for a version two. That’s better, but still not quite there. Version three is where the magic all comes together.

At least that’s how things played out in [Shane Wighton]’s quest to build the perfect basketball robot. His first version was a passive backboard whose paraboloid shape redirected incoming shots toward the hoop. The math behind the board’s shape was cool, but the build conspicuously lacked complicated systems like motors and machine vision; you know, the fun stuff. Version two added all of those elaborations and caught off-target shots much better, but it still had a limited working envelope.

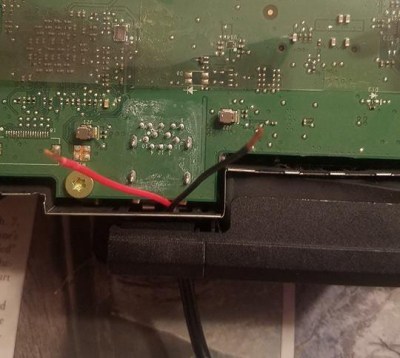

Enter version three, seen in action in the video below. Taking a page from [Mark Rober]’s playbook, [Shane] built a wickedly overengineered CoreXY-style robot that covers his shop wall. Everything was built from the lightest possible materials to keep inertia to a minimum, so the target gets to the right place as quickly as possible. [Shane] even figured out how to mount the motor that tilts the backboard on the frame rather than on the carriage. A Kinect handles depth detection on the incoming ball (or the builder’s head), and the robot drains pretty much every shot within its reach.

[Shane] has been doing some great work automating away the jobs of pro athletes. In addition to basketball, he has tackled both golf and baseball, bringing explosive power to each. We’re looking forward to versions two and three on both of those builds as well.
