It’s become a familiar theme over the last couple of decades: hardware is rendered useless when its manufacturer pulls the cloud service on which it depends. This is particularly annoying when the device is something that shouldn’t need a cloud service to run in the first place, and several manufacturers have found themselves in hot water because of this.

Somewhere between the devices that genuinely need a cloud service and those that don’t sits the Bose SoundTouch speaker system, which includes a set of six internet radio preset buttons. In early May the service behind them was shuttered, and now here’s [Tostmann] with an ESP32 firmware to bring them back.

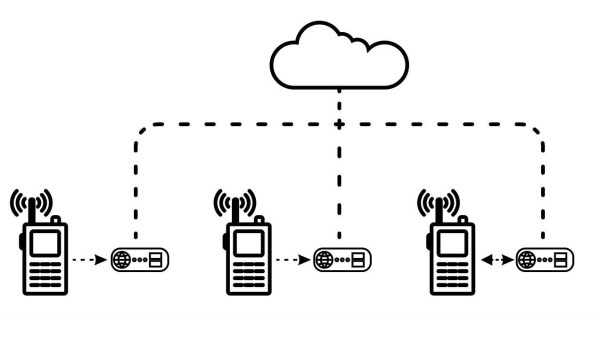

As you might imagine, the ESP32 emulates just enough of the now-defunct Bose cloud service to keep the speaker happy, but it has a clever trick up its sleeve. Normally these hacks rely on DNS redirects at the router, but this one avoids that thanks to a diagnostic interface on the Bose unit that allows the server address to be rewritten. The ESP32 uses that interface to replace the server address with its own, and the speaker is none the wiser.
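To give a flavour of what "emulating just enough of a cloud service" can look like, here is a minimal sketch in Python rather than ESP32 firmware. It is not [Tostmann]'s code and does not reproduce the Bose protocol: the `/preset/<n>` path, the JSON shape, and the `example.invalid` stream URLs are all made up for illustration. The idea is simply a small local server that answers the handful of requests a device makes and nothing more.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical preset table: button number -> stream URL (placeholder values).
PRESETS = {n: f"http://example.invalid/stream{n}" for n in range(1, 7)}


def preset_response(path, presets=PRESETS):
    """Map a request path such as /preset/3 to a (status, JSON body) pair.

    The path layout is an assumption for this sketch, not the real API.
    """
    parts = path.strip("/").split("/")
    if len(parts) == 2 and parts[0] == "preset" and parts[1].isdecimal():
        button = int(parts[1])
        if button in presets:
            body = {"button": button, "url": presets[button]}
            return 200, json.dumps(body).encode()
    return 404, json.dumps({"error": "unknown preset"}).encode()


class StubHandler(BaseHTTPRequestHandler):
    """Answers GET requests using the lookup above and ignores everything else."""

    def do_GET(self):
        status, body = preset_response(self.path)
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port=8080):
    """Run the stub until interrupted; the port is an arbitrary choice."""
    HTTPServer(("0.0.0.0", port), StubHandler).serve_forever()
```

Calling `serve()` starts the stub, and a request for `/preset/3` returns the third placeholder entry. Keeping the lookup in a plain function separate from the handler means the mapping can be checked without opening a socket.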

We like this hack both for its ingenuity and because it saves yet another orphaned cloud product from becoming e-waste. This isn’t the first time we’ve seen a manufacturer on the naughty step for these practices.

Header image: TAKA@P.P.R.S, CC BY-SA 2.0.