You wouldn’t think that shaking something in just the right way would be the recipe for creating laser light, but as [Les Wright] explains in his new video, that’s pretty much how his DIY Raman laser works.

Of course, “shaking” is a gross oversimplification of Raman scattering, which lies at the heart of this laser. [Les] spends the first half of the video explaining Raman scattering and stimulated Raman scattering. It’s an excellent treatment of the subject matter, but the short version is that when certain crystals and liquids are pumped with a high-intensity laser, they emit coherent, monochromatic light at a lower frequency than the pumping laser. By carefully selecting the gain medium and the pumping laser wavelength, a Raman laser can be made to emit at almost any wavelength.

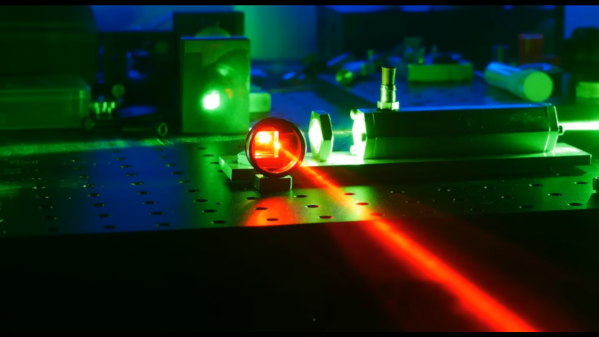

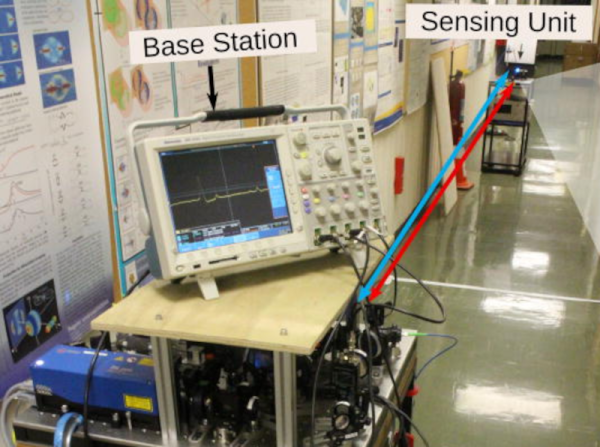

Most gain media for Raman lasers are somewhat exotic, but luckily some easily available materials will work just fine too. [Les] chose the common solvent dimethylsulfoxide (DMSO) for his laser, which was made from a length of aluminum hex stock. Bored out, capped with quartz windows, and fitted with a port to fill it with DMSO, the laser — or more correctly, a resonator — is placed in the path of [Les]’ high-power tattoo removal laser. Laser light at 532 nm from the pumping laser passes through a focusing lens into the DMSO where the stimulated Raman scattering takes place, and 628 nm light comes out. [Les] measured the wavelengths with his Raspberry Pi spectrometer, and found that the emitted wavelength was exactly as predicted by the Raman spectrum of DMSO.
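For anyone who wants to check the arithmetic, here's a rough sketch of how the pump and output wavelengths relate. Raman shifts are usually quoted in wavenumbers (cm⁻¹), and the Stokes output sits one shift below the pump. The function names are ours, not anything from [Les]' video, and the only inputs are the 532 nm and 628 nm figures quoted above.

```python
# Minimal sketch: relate pump wavelength, Stokes wavelength, and Raman shift.
# Function names are hypothetical; the 532 nm and 628 nm values come from the
# article text, not from an independent measurement.

def wavenumber_cm1(wavelength_nm: float) -> float:
    """Convert a wavelength in nm to a wavenumber in cm^-1."""
    return 1e7 / wavelength_nm


def raman_shift_cm1(pump_nm: float, stokes_nm: float) -> float:
    """Raman shift implied by a pump wavelength and the Stokes output."""
    return wavenumber_cm1(pump_nm) - wavenumber_cm1(stokes_nm)


def stokes_wavelength_nm(pump_nm: float, shift_cm1: float) -> float:
    """First Stokes wavelength for a given pump and Raman shift."""
    return 1e7 / (wavenumber_cm1(pump_nm) - shift_cm1)


if __name__ == "__main__":
    shift = raman_shift_cm1(532.0, 628.0)
    print(f"Implied Raman shift: {shift:.0f} cm^-1")
    # Round trip: feeding the shift back in recovers the output wavelength.
    print(f"Stokes output: {stokes_wavelength_nm(532.0, shift):.1f} nm")
```

With the article's numbers, the implied shift works out to roughly 2,870 cm⁻¹, which is in the range where C-H stretching vibrations typically show up in Raman spectra. Swap in a different pump wavelength or a different medium's shift and the same function gives the new output color.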

It’s always a treat to see one of [Les]’ videos pop up in our feed; he’s got the coolest toys, and he not only knows what to do with them, but also how to explain the physics behind them. It’s rare to watch a video and come away feeling smarter than when you started.
