[Tuco] is a cat who shares the space of [Micah Elizabeth Scott]. He is a large tabby tomcat, and he is polydactyl, which is to say he has a congenital excess of toes. He is an extremely active and engaging creature and enjoys playing and interacting with her. We covet [Tuco].

Sadly for the rest of us who love cats, unless we know [Micah] personally we’ll never have the opportunity to play with [Tuco]. She appreciates the cat-shaped void this will leave in our lives, and to help fill it she’s building a telepresence robot that will let the rest of us interact with him in real time.

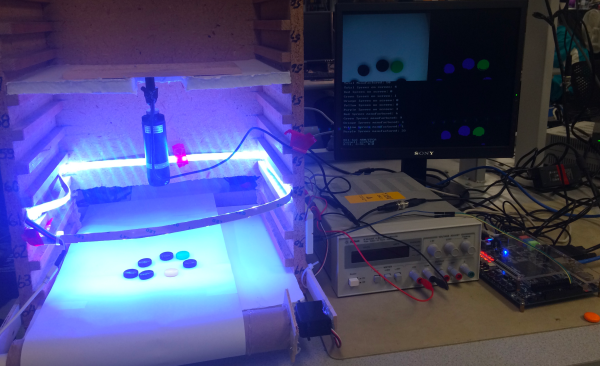

Her idea is to make a flying robot equipped with a camera on a gimbal. Because mounting it on a multirotor platform would be a hazard, she’s instead making something closer to the aerial cameras you might be familiar with from sporting fixtures: a motorised platform suspended from the corners of her roof space on a set of nylon ropes, which can move at will by adjusting the length of each tether. It is suggested that one day the device will be able to launch plastic bolts for [Tuco] to chase, and will incorporate other interactive features to allow online users to engage with him.
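For the curious, the geometry behind moving a platform by adjusting tether lengths can be sketched in a few lines of Python. This is only an illustration and not [Micah]'s code: it assumes four anchor points at made-up room coordinates, treats the platform as a single point, and ignores rope sag and stretch. The names `ANCHORS`, `tether_lengths`, and `length_changes` are hypothetical.

```python
import math

# Hypothetical anchor points in metres, one per upper corner of a
# 4 m x 3 m room with the anchors 2.5 m above the floor (an assumption).
ANCHORS = [
    (0.0, 0.0, 2.5),
    (4.0, 0.0, 2.5),
    (4.0, 3.0, 2.5),
    (0.0, 3.0, 2.5),
]


def tether_lengths(position, anchors=ANCHORS):
    """Straight-line length of each tether for a given platform position."""
    return [math.dist(position, anchor) for anchor in anchors]


def length_changes(start, target, anchors=ANCHORS):
    """Per-winch change in length: positive pays rope out, negative reels in."""
    old = tether_lengths(start, anchors)
    new = tether_lengths(target, anchors)
    return [n - o for o, n in zip(old, new)]


if __name__ == "__main__":
    centre = (2.0, 1.5, 2.5)
    near_floor = (2.0, 1.5, 0.5)
    for i, delta in enumerate(length_changes(centre, near_floor)):
        print(f"winch {i}: {delta:+.3f} m")
```

Each target position maps directly to a set of rope lengths, so a controller along these lines would only need to turn the differences into winch motion. A real rig would also have to keep every rope in tension, which this sketch does not attempt.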

The video introducing the project, which we’ve placed below the break, shows progress so far: she has completed a prototype windlass mechanism and worked on reverse engineering the gimbal mechanism for serial control. We’ll probably never meet [Tuco] in person, but we can’t wait to interact with him online.

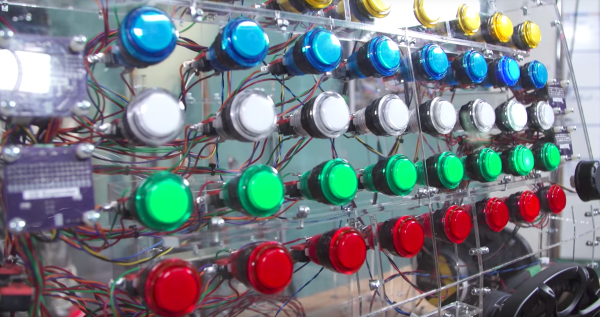
