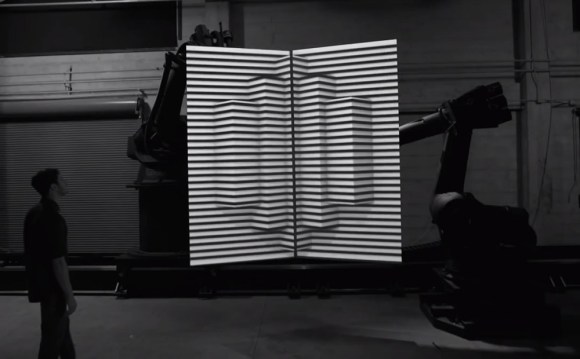

Take three industrial robots, two 4’ x 8’ canvases, and several powerful video projectors. Depending on who is doing the robot programming, you may end up with a lot of broken glass and splinters, or you may end up with The Box. The latest video released by The Creators Project, The Box combines industrial robots with projection mapping. We recently featured Disarm from the same channel.

The Box is one of those cases where several existing technologies are put together with breathtaking results. We can’t help but think of the possibilities of systems such as CastAR while watching the video. Two of the robots move the large canvases while projectors display a series of 3D images on them. The third robot moves the camera.

In the behind-the-scenes video, the creators reveal that the robots are programmed using a Maya plugin. The plugin allowed them to synchronize the robots’ movements with the animation. The entire video is a complex, choreographed dance: even the position of the actor was pre-programmed into Maya.