It is absolutely no exaggeration to say that [Michael Steil] gave the Ultimate Game Boy talk at the 33rd Chaos Communication Congress back in 2016. Watch it, and if you think that there’s been a better talk since then, post up in the comments and we’ll give you the hour back. (As soon as we get this time machine working…)

We were looking into the audio subsystem of the Game Boy a while back, and scouring the Internet for resources, when we ran across this talk. Not only does [Michael] do a perfect job of demonstrating the entire audio system, allowing you to write custom chiptunes at the register level if that’s your thing, but he also gets deep into the graphics engine. You’ll never look at a low-bit Pole Position clone the same again. The talk even includes some new (in 2016, anyway) hacks on the pixel pipeline in the last 15 minutes, and a quick review of the hacking tools and even the Game Boy camera.

Why do you care about the Game Boy? It’s probably the last, and best, mass-produced 8-bit game machine. You can get your hands on one, or a clone, for dirt cheap. And if you build a microcontroller-based cartridge, you can hack the whole thing non-destructively, live, and in Python! Or emulate the whole shebang. Either way, when you’re done, you’ve got a portable demo of your hard work thanks to the Nintendo hardware. It makes the perfect retro project.

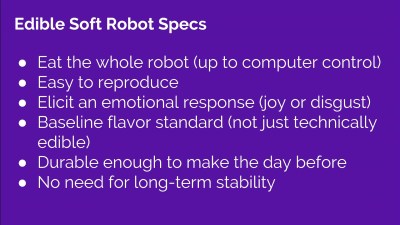

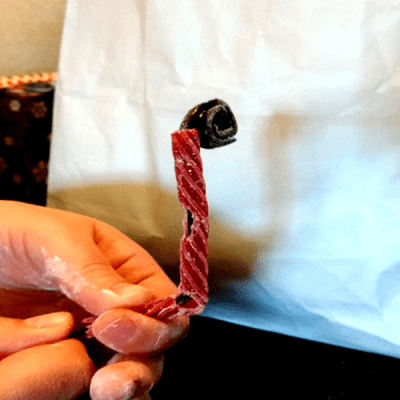
