Those of us who enjoy seeing mechanical carnage have been blessed by the rise of video sharing services and high speed cameras. Oftentimes, these slow motion videos are heavy on destruction and light on science. However, this video from [Smarter Every Day] is worth watching, purely for the fluid mechanics at play when a supersonic baseball hits a 1-gallon jar of mayo.

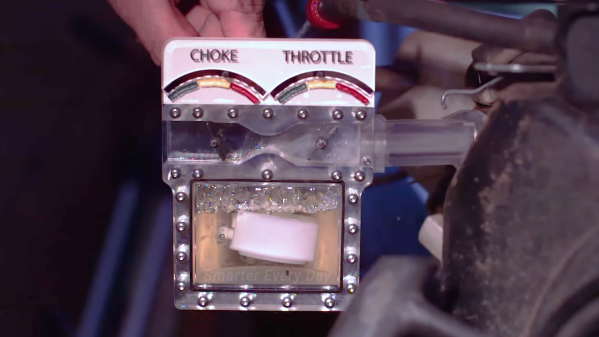

The experiment uses the baseball cannon that [Destin] of [Smarter Every Day] built last year. Ostensibly, the broader aim of the video is to characterize the baseball cannon’s performance. Shots are fired with varying pressures in the air tank and varying vacuum levels in the barrel, and the resulting data is charted.

However, the real glory starts 18:25 into the video, where a baseball is fired into the gigantic jar of mayo. The sheer power of the collision obliterates the jar in an instant, turning the mayo into a potent-smelling aerosol.

Amazingly, the slow-motion camera reveals all manner of interesting phenomena. There’s a flash of flame as the ball hits the jar, suggesting compression ignition happened at impact with the jar’s label. A shadow from the shockwave ahead of the ball can be seen in the video, and particles in the cloud of mayo can be seen changing direction as the trailing shock catches up.
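For a rough feel of what "supersonic" means here, the sketch below computes a Mach number and the pressure jump across an idealized normal shock using the standard perfect-gas relations for air. The ball speed and air temperature are hypothetical placeholders, not figures from the video, and the function names are ours. A real bow shock ahead of a ball is only approximately normal along the centerline, so treat the result as a back-of-the-envelope estimate.

```python
import math

GAMMA = 1.4      # ratio of specific heats for air (perfect-gas assumption)
R_AIR = 287.05   # specific gas constant for dry air, J/(kg*K)


def speed_of_sound(temp_k):
    """Speed of sound in air (m/s) at the given temperature in kelvin."""
    return math.sqrt(GAMMA * R_AIR * temp_k)


def mach_number(speed_m_s, temp_k):
    """Mach number of an object moving at speed_m_s through still air."""
    return speed_m_s / speed_of_sound(temp_k)


def normal_shock_pressure_ratio(mach):
    """Static pressure ratio p2/p1 across a normal shock at the given Mach number."""
    if mach < 1.0:
        raise ValueError("normal shock relations need Mach >= 1")
    return 1.0 + 2.0 * GAMMA / (GAMMA + 1.0) * (mach ** 2 - 1.0)


if __name__ == "__main__":
    ball_speed = 450.0   # m/s, HYPOTHETICAL value chosen for illustration
    air_temp = 293.15    # K, assumed room-temperature air

    m = mach_number(ball_speed, air_temp)
    ratio = normal_shock_pressure_ratio(m)
    print(f"Mach number: {m:.2f}")
    print(f"Pressure ratio across an idealized normal shock: {ratio:.2f}")
```

Swapping in a measured speed from the video's charts would give a more meaningful number than the placeholder above.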

The slow-motion footage deserves to be shown in flow-visualization classes, not only because it’s awesome, but because it’s a great demonstration of supersonic flow phenomena. Video after the break.
