RealSense cameras have been a fascinating piece of tech from Intel, and we’ve seen a number of cool applications in the hacker world, from robots to smart appliances. Unfortunately, Intel did discontinue parts of the RealSense lineup at one point, specifically the LiDAR and face-tracking models. Apparently, these weren’t popular, and we haven’t seen them in hacks either. Until now, that is. [Lina] brings us a real-world application for the RealSense face tracking cameras: a FaceID application for Linux.

The project is as simple as it sounds: if the camera’s built-in face recognition module recognizes you, your lockscreen is unlocked. With the target being Linux, it has to tie into the Pluggable Authentication Modules (PAM) subsystem for authentication, and of course, there’s a PAM module for RealSense to go with it, aptly named pam_sauron. This module is written in Zig, a modern C-like language, so it’s both a good example of how to create your own PAM integrations and a path towards doing that in a language other than C for once. As usual, there are TODOs, like improving the UX and taking advantage of some security features RealSense cameras have, but it’s nevertheless a fun and self-sufficient application for one of the F4XX-series RealSense cameras, in case you happen to own one.
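For a rough idea of what tying a module into PAM looks like, here is a sketch of a service configuration entry. It uses standard Linux-PAM configuration syntax, but the details are assumptions rather than the project’s documented setup: the shared object name `pam_sauron.so` is guessed from the module name, and the file to edit depends on which lockscreen and distribution you use.

```
# Hypothetical entry in the lockscreen's PAM service file under /etc/pam.d/
# (the exact file name depends on the lockscreen; not taken from the project docs).
# "sufficient" means a successful face match ends the auth stack with success,
# while a failed match falls through to the lines below, such as the password prompt.
auth    sufficient    pam_sauron.so
```

Placing the line above the existing `auth` entries keeps the password as a fallback when the camera doesn’t recognize anyone.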

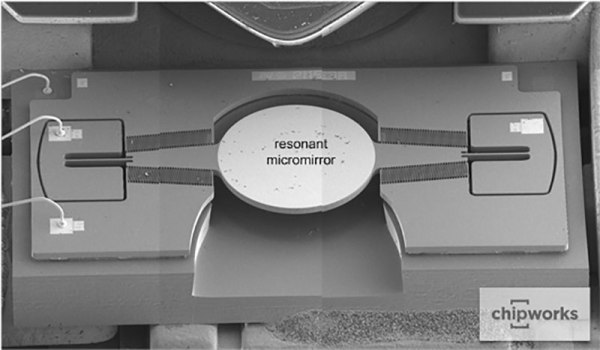

Ever since the introduction of RealSense, we’ve seen these cameras used in robotics and 3D scanning, thanks at least in part to their ability to be used on Linux. Thankfully, Intel only discontinued the less popular RealSense cameras, which didn’t affect the main RealSense lineup, and the hacker-beloved depth cameras are still available for all of our projects. Wondering about the tech behind it? Here’s a teardown of a RealSense camera module intended for laptop use.