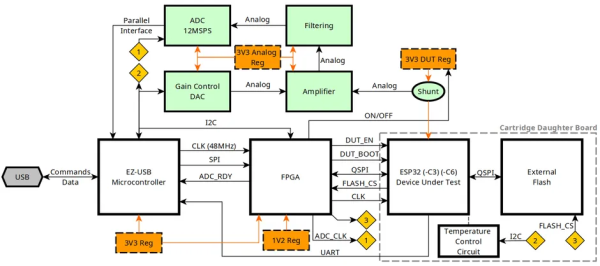

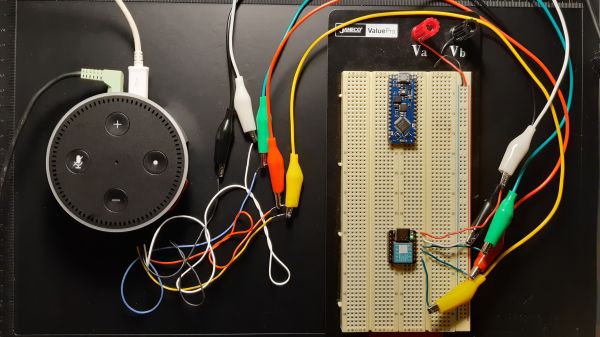

Espressif’s ESP32 microcontrollers come with a Flash encryption feature that, when enabled, ensures that the data and code stored on the (usually external) Flash chip are encrypted with AES-256 (ESP32) or better (ESP32-C3, -C6). For the ESP32 this encryption feature has been shown to be vulnerable to side-channel attacks (SCA), which led [courk] not only to replicate this result with a custom ESP Correlation Power Analysis (CPA) board (pictured) that captures the power usage of the MCU, but also to try his luck with the ESP32-C3 and ESP32-C6 parts, which should be tougher nuts to crack.

Whereas the ESP32 uses a fairly straightforward AES-256 encryption routine that, together with the exposed Flash communication lines on the QSPI bus, makes for a textbook SCA example, the ESP32-C3 ups the encryption to XTS-AES, which uses two 128-bit keys on the -C3 part (XTS-256). This MCU is still susceptible to the same CPA-based side-channel attack: it is somewhat harder to attack than the ESP32, but by no means impossible.
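For readers unfamiliar with CPA, the core idea can be sketched in a few lines of Python. This is not [courk]'s code and does not touch real hardware. It is a toy simulation under stated assumptions: the "power traces" are simulated as the Hamming weight of an S-box output plus Gaussian noise, the S-box is the 4-bit one from the PRESENT cipher standing in for the AES S-box, and every function and variable name here is hypothetical.

```python
import math
import random

# Toy 4-bit S-box (from the PRESENT cipher), standing in for the AES S-box.
SBOX = [0xC, 0x5, 0x6, 0xB, 0x9, 0x0, 0xA, 0xD,
        0x3, 0xE, 0xF, 0x8, 0x4, 0x7, 0x1, 0x2]


def hamming_weight(x):
    """Number of set bits: the assumed power leakage model."""
    return bin(x).count("1")


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / math.sqrt(vx * vy)


def simulate_traces(secret_key, n_traces, noise_sd, rng):
    """Simulate one noisy power sample per known 4-bit input."""
    inputs = [rng.randrange(16) for _ in range(n_traces)]
    samples = [hamming_weight(SBOX[p ^ secret_key]) + rng.gauss(0, noise_sd)
               for p in inputs]
    return inputs, samples


def cpa_recover_key(inputs, samples):
    """Score every key guess by |correlation| between model and measurement."""
    scores = {}
    for guess in range(16):
        model = [hamming_weight(SBOX[p ^ guess]) for p in inputs]
        scores[guess] = abs(pearson(model, samples))
    best = max(scores, key=scores.get)
    return best, scores


rng = random.Random(1234)  # fixed seed so the simulation is repeatable
inputs, samples = simulate_traces(secret_key=0xA, n_traces=3000,
                                  noise_sd=1.0, rng=rng)
best_guess, scores = cpa_recover_key(inputs, samples)
print(f"best key guess: {best_guess:#x} (|r| = {scores[best_guess]:.3f})")
```

The attacker never sees the key directly. They only check which guess makes the predicted leakage line up best with what was measured. A real attack on Flash encryption would work on captured multi-sample traces and full-width key bytes, and would typically need far more traces and careful alignment.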
