It’s been at least a month or two since the last vulnerability in Intel CPUs was released, but this time it’s serious. Foreshadow is the latest speculative execution attack that allows balaclava-wearing hackers to steal your sensitive information. You know it’s a real 0-day because it already has a domain, a logo, and this time, there’s a video explaining in simple terms anyone can understand why the sky is falling. The video uses ukuleles in the sound track, meaning it’s very well produced.

The Foreshadow attack relies on Intel’s Software Guard Extension (SGX) instructions that allow user code to allocate private regions of memory. These private regions of memory, or enclaves, were designed for VMs and DRM.

How Foreshadow Works

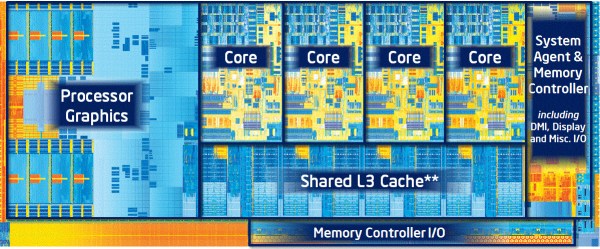

The Foreshadow attack utilizes speculative execution, a feature of modern CPUs most recently in the news thanks to the Meltdown and Spectre vulnerabilities. The Foreshadow attack reads the contents of memory protected by SGX, allowing an attacker to copy and read back private keys and other personal information. There is a second Foreshadow attack, called Foreshadow-NG, that is capable of reading anything inside a CPU’s L1 cache (effectively anything in memory with a little bit of work), and might also be used to read information stored in other virtual machines running on a third-party cloud. In the worst case scenario, running your own code on an AWS or Azure box could expose data that isn’t yours on the same AWS or Azure box. Additionally, countermeasures to Meltdown and Spectre attacks might be insufficient to protect from Foreshadown-NG

The researchers behind the Foreshadow attacks have talked with Intel, and the manufacturer has confirmed Foreshadow affects all SGX-enabled Skylake and Kaby Lake Core processors. Atom processors with SGX support remain unaffected. For the Foreshadow-NG attack, many more processors are affected, including second through eighth generation Core processors, and most Xeons. This is a significant percentage of all Intel CPUs currently deployed. Intel has released a security advisory detailing all the affected CPUs.