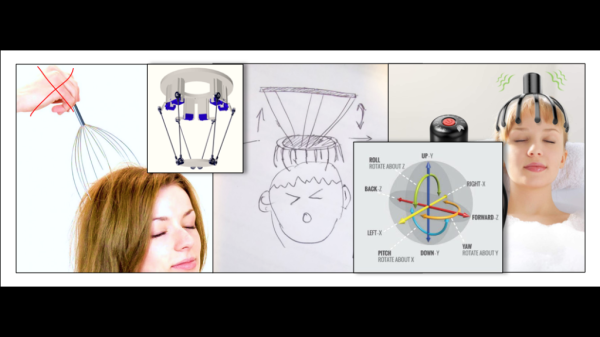

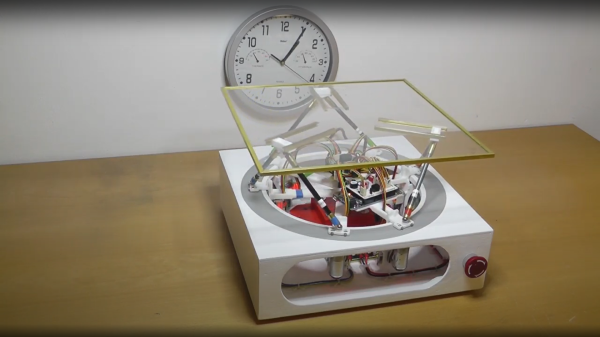

3D mice with six degrees of freedom (6DOF) motion are highly valued by professional CAD users. However, the entry-level versions typically cost upwards of $150 and are produced by a single manufacturer. [Colton Baldridge] has created the OS3M Mouse — an open source alternative using PCB coils and 3D printed flexures.

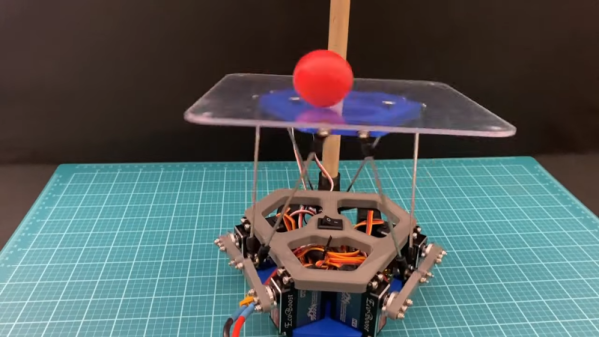

The primary challenges in building a 6DOF input device like the 3Dconnexion SpaceMouse are a mechanical coupling that allows full-range motion, and electronics that can measure that motion precisely and consistently. After several iterations of printed flexure combinations and a trip down the finite element analysis (FEA) rabbit hole, [Colton] had a working single-piece mechanical solution.

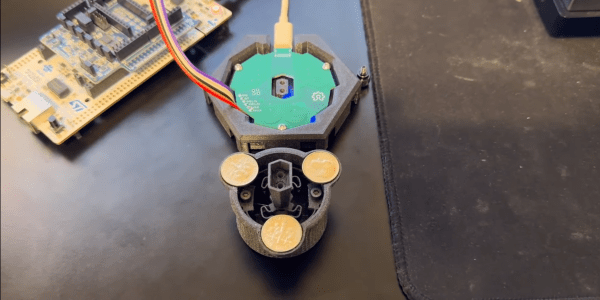

To measure the knob’s movement accurately, [Colton] uses inductive sensing. Inductance-to-digital converters (LDCs) measure the change in inductance across three pairs of PCB coils, each with an opposing metal disk mounted on the knob. This setup lets [Colton] use a Stewart platform’s kinematic model to calculate the knob’s relative position. The calculations run on an STM32, which also acts as a USB HID device to send the position data to a computer. For the demo, [Colton] created a simple C++ app that translates the position data into SolidWorks API calls.
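To make the "Stewart platform kinematic model" step more concrete, here is a minimal Python sketch of how six distance readings could be turned back into a knob pose. It assumes, hypothetically, that each coil reading has already been converted into an effective "leg length" between a fixed base point and a point on the knob, and it uses made-up geometry (`BASE`, `TOP`, `HOME`), a roll/pitch/yaw convention, and a Newton-Raphson forward-kinematics solve. None of these names or numbers come from the OS3M firmware, which may work quite differently.

```python
import math

# Hypothetical geometry in mm, NOT taken from OS3M: three pairs of base points
# (around 0, 120 and 240 degrees) and three pairs of knob points (around 60,
# 180 and 300 degrees). Leg i joins BASE[i] to TOP[i].
def _ring(radius, angles_deg):
    return [(radius * math.cos(math.radians(a)), radius * math.sin(math.radians(a)), 0.0)
            for a in angles_deg]

BASE = _ring(30.0, [-10, 10, 110, 130, 230, 250])
TOP = _ring(20.0, [-50, 50, 70, 170, 190, 290])  # in the knob's own frame
HOME = [0.0, 0.0, 15.0, 0.0, 0.0, 0.0]           # x, y, z, roll, pitch, yaw at rest


def rotation(roll, pitch, yaw):
    """Rotation matrix R = Rz(yaw) @ Ry(pitch) @ Rx(roll)."""
    cr, sr = math.cos(roll), math.sin(roll)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cy, sy = math.cos(yaw), math.sin(yaw)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp, cp * sr, cp * cr],
    ]


def leg_lengths(pose):
    """Inverse kinematics: distance from each base point to its knob point."""
    x, y, z, roll, pitch, yaw = pose
    r = rotation(roll, pitch, yaw)
    lengths = []
    for base, (tx, ty, tz) in zip(BASE, TOP):
        # Rotate the knob point, then translate it by the knob position.
        p = [offset + row[0] * tx + row[1] * ty + row[2] * tz
             for offset, row in zip((x, y, z), r)]
        lengths.append(math.dist(p, base))
    return lengths


def solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def forward_kinematics(measured, guess=HOME, iterations=20, eps=1e-6, tol=1e-9):
    """Newton-Raphson: find the pose whose leg lengths match `measured`."""
    pose = list(guess)
    for _ in range(iterations):
        current = leg_lengths(pose)
        residual = [m - c for m, c in zip(measured, current)]
        if max(abs(v) for v in residual) < tol:
            break
        # Numerical Jacobian d(length_i)/d(pose_j) by forward differences.
        jac = [[0.0] * 6 for _ in range(6)]
        for j in range(6):
            bumped = pose[:]
            bumped[j] += eps
            for i, length in enumerate(leg_lengths(bumped)):
                jac[i][j] = (length - current[i]) / eps
        step = solve(jac, residual)
        pose = [p + s for p, s in zip(pose, step)]
    return pose
```

For example, `forward_kinematics(leg_lengths([1.0, -0.5, 16.0, 0.05, -0.03, 0.08]))` recovers that pose. Starting the solver at the rest pose suits a spring-centered knob, because the true pose never strays far from it; a microcontroller version might instead use a precomputed Jacobian or a linearized model to save cycles.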


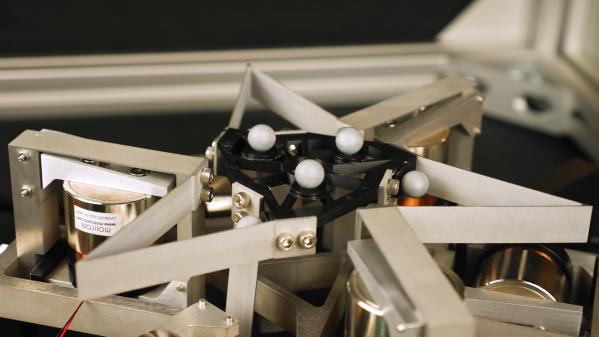

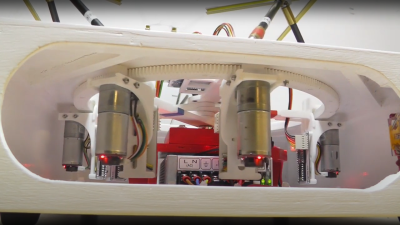

series of videos from a few years ago, showing the construction and operation of such a beast. This is a very neat mechanism made up of six geared motors at the ends of arms, engaging with a large internal gear. The common end of each arm rides on the central shaft, each with its own bearing. With the addition of the usual six linkages, twelve ball joints, and a few brackets, a complete platform is realised.
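The rotary design described here reduces to a small inverse-kinematics problem per arm. The Python sketch below assumes, hypothetically, that every arm pivots about the vertical central shaft (the z axis), that each arm's ball joint sits at the same radius `ARM_RADIUS` but at its own height (since the arms are stacked on separate bearings), and that all linkages share one length `LINK_LENGTH`. These names and numbers are illustrative, not taken from the build in the videos. Expanding |P − A(θ)|² = ℓ² for a platform joint P and arm joint A(θ) gives p_x cos θ + p_y sin θ = K, which has the closed-form solutions θ = atan2(p_y, p_x) ± acos(K/ρ), with ρ = √(p_x² + p_y²).

```python
import math

# Hypothetical dimensions in mm, chosen only for illustration.
ARM_RADIUS = 100.0   # distance from the central shaft to each arm's ball joint
LINK_LENGTH = 120.0  # length of every linkage between ball joints


def arm_joint(theta, arm_height):
    """Position of an arm's ball joint when the arm is at angle theta."""
    return (ARM_RADIUS * math.cos(theta), ARM_RADIUS * math.sin(theta), arm_height)


def arm_angle(joint, arm_height, elbow=+1):
    """Arm angle (radians) about the shaft that lets a linkage of LINK_LENGTH
    reach the platform ball joint `joint` = (x, y, z).

    `elbow` (+1 or -1) picks one of the two solutions. Returns None when the
    joint is out of reach or lies on the shaft axis (where the angle is
    undetermined)."""
    px, py, pz = joint
    rho = math.hypot(px, py)
    if rho == 0.0:
        return None
    # From |P - A(theta)|^2 = LINK_LENGTH^2, rearranged as rho*cos(theta - phi) = k.
    k = (px * px + py * py + ARM_RADIUS ** 2 + (pz - arm_height) ** 2
         - LINK_LENGTH ** 2) / (2.0 * ARM_RADIUS)
    c = k / rho
    if abs(c) > 1.0:
        return None  # the linkage is too short or too long to connect
    return math.atan2(py, px) + elbow * math.acos(c)
```

Calling `arm_angle` once for each of the six platform joints (with the joint positions obtained by rotating and translating the platform's ball-joint pattern) gives the six motor targets. The `elbow` sign would presumably stay fixed per arm so that the linkages never jump between the two solutions.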
