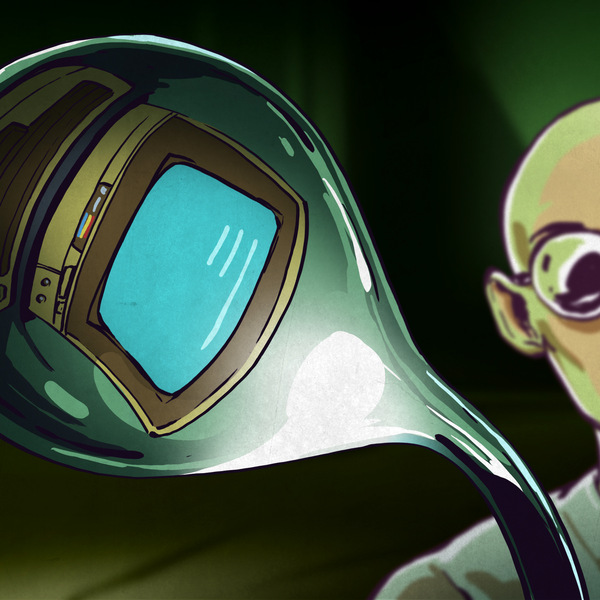

[XYZAiden]’s concept for a flexible robotic gripper might be a few years old, but if anything it’s even more accessible now than when he first prototyped it. It uses only a single motor and requires no complex mechanical assembly, and 3D printing with flexible filament has only gotten easier and more reliable since then.

The four-armed gripper you see here prints as a single piece, and is cable-driven with a single metal-geared servo powering the assembly. Each arm has a nylon string threaded through it, so when the servo turns it pulls each string, which in turn makes each arm curl inward and closes the grip. Because of the way the gripper is made, releasing only requires relaxing the cables; an arm’s natural state is to fall open.
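
For anyone who wants to play with the idea before printing, here is a rough Python sketch of the control side: it maps a "how closed" value between 0 and 1 to a servo angle, a pulse width, and an approximate length of string pulled in. Every number in it (the spool radius, the angle limits, the 1000 to 2000 µs pulse range) is an assumption chosen for illustration and not taken from [XYZAiden]’s design, and the function names are hypothetical.

```python
import math

# All constants below are assumptions for illustration; measure your own build.
SPOOL_RADIUS_MM = 10.0     # hypothetical radius of the horn the strings wrap around
OPEN_ANGLE_DEG = 0.0       # servo angle with the cables relaxed (arms open)
CLOSED_ANGLE_DEG = 120.0   # servo angle with the cables fully pulled (arms curled)
MIN_PULSE_US = 1000.0      # common hobby-servo pulse range; varies by model
MAX_PULSE_US = 2000.0
SERVO_RANGE_DEG = 180.0    # assumed full travel of the servo


def grip_to_angle(grip: float) -> float:
    """Map a grip fraction (0 = open, 1 = closed) to a servo angle in degrees."""
    grip = max(0.0, min(1.0, grip))  # clamp out-of-range requests
    return OPEN_ANGLE_DEG + grip * (CLOSED_ANGLE_DEG - OPEN_ANGLE_DEG)


def angle_to_pulse_us(angle_deg: float) -> float:
    """Linearly map a servo angle to a pulse width in microseconds."""
    return MIN_PULSE_US + (angle_deg / SERVO_RANGE_DEG) * (MAX_PULSE_US - MIN_PULSE_US)


def cable_pull_mm(angle_deg: float) -> float:
    """Approximate string pulled in: arc length on the spool from the open angle."""
    return SPOOL_RADIUS_MM * math.radians(angle_deg - OPEN_ANGLE_DEG)


if __name__ == "__main__":
    for grip in (0.0, 0.5, 1.0):
        angle = grip_to_angle(grip)
        print(
            f"grip={grip:.1f}  angle={angle:.0f} deg  "
            f"pulse={angle_to_pulse_us(angle):.0f} us  "
            f"pull={cable_pull_mm(angle):.1f} mm"
        )
```

The pulse width would still need to be handed to whatever actually drives the servo (a microcontroller PWM pin, a servo driver board, and so on), which is left out here because it depends entirely on the hardware in your junk bin.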

The main downside is that the servo and cables are working at a mechanical disadvantage, so the grip won’t be particularly strong. But for lightweight, irregular objects, this could be a feature rather than a bug.

The biggest advantage is that it’s extremely low-cost, and simple to both build and use. If one has access to a 3D printer and can make a servo rotate, raiding a junk bin could probably yield everything else.

DIY robotic gripper designs come in all sorts of variations. For example, this “jamming” bean-bag style gripper does an amazing, high-strength job of latching onto irregular objects without squashing them in the process. And here’s one built around grippy measuring tape, capable of surprising dexterity.
