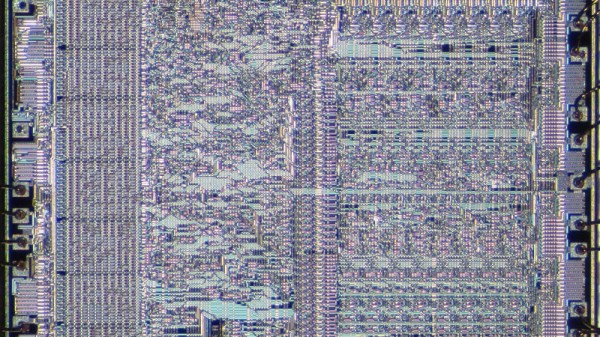

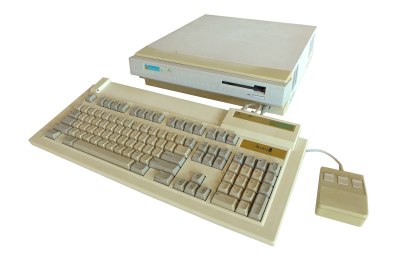

As I’m sure many of you know, x86 architecture has been around for quite some time. It has its roots in Intel’s early 8086 processor, the first in the family. Indeed, even the original 8086 inherits a small amount of architectural structure from Intel’s 8-bit predecessors, dating all the way back to the 8008. But the 8086 evolved into the 186, 286, 386, 486, and then they got names: Pentium would have been the 586.

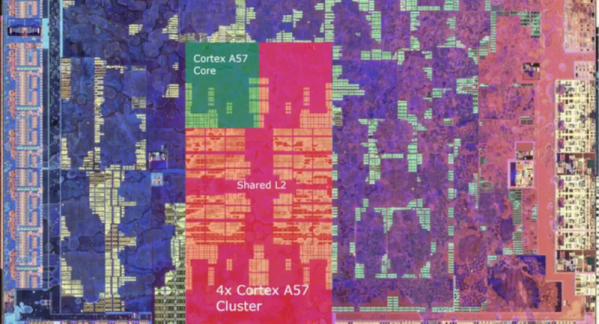

Along the way, new instructions were added, but the core of the x86 instruction set was retained. And a lot of effort was spent making the same instructions faster and faster. This has become so extreme that, even though the 8086 and modern Xeon processors can both run a common subset of code, the two CPUs architecturally look about as far apart as they possibly could.

So here we are today, with even the highest-end x86 CPUs still supporting the archaic 8086 real mode, where the CPU can address memory directly, without any redirection. Having this level of backwards compatibility can cause problems, especially with respect to multitasking and memory protection, but it was a feature of previous chips, so it’s a feature of current x86 designs. And there’s more!

I think it’s time to put a lot of the legacy of the 8086 to rest, and let the modern processors run free. Continue reading “Why X86 Needs To Die”