In the last few decades, building engineers and architects have made tremendous strides in improving the efficiency of buildings and of the equipment that keeps them safe and comfortable to live in. New technology like heat pumps is a major factor, as are improvements to existing things like insulation methods and building materials. But once the low-hanging fruit is picked, technology like this smart occupancy sensor created by [Sina Moshksar] might be necessary to help drive further efficiency gains.

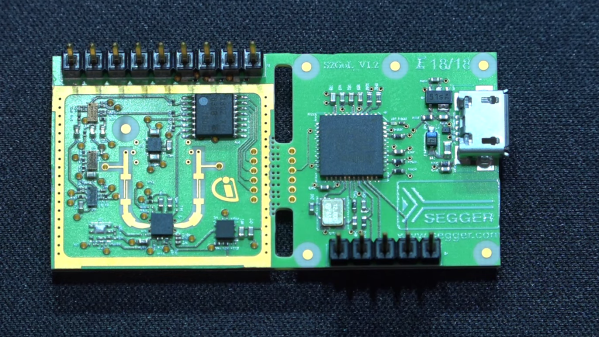

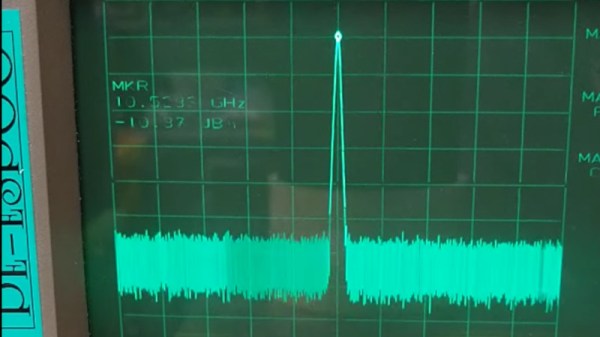

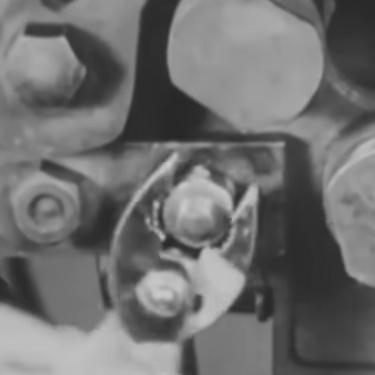

Known as RoomSense IQ, the device mounts somewhere within a small room and uses a number of different technologies to keep track of the number of occupants. The primary method is mmWave radar, which can sense the presence of a person up to five meters away, but it also includes a PIR sensor to help prevent false positives and distinguish human activity from non-human activity. The device integrates with home automation systems, feeding them occupancy data they can use to improve their own performance. It’s also designed to be low-cost and easy to install, so it should be relatively straightforward to add a few to any existing system.
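To get a feel for why pairing the two sensors helps, here is a minimal Python sketch of one way radar and PIR readings could be combined. It is not taken from the RoomSense IQ firmware: the class name `OccupancyFusion`, the timing values, and the rule itself (radar plus recent PIR motion to mark a room occupied, radar alone to keep it occupied) are all assumptions made purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class OccupancyFusion:
    """Hypothetical radar + PIR fusion; not the project's actual logic."""

    hold_seconds: float = 30.0        # assumed: grace period after radar loses the target
    pir_window_seconds: float = 10.0  # assumed: how recent PIR motion must be to confirm
    occupied: bool = False
    _last_pir: float = float("-inf")
    _last_radar: float = float("-inf")

    def update(self, now: float, radar_present: bool, pir_motion: bool) -> bool:
        """Feed one pair of sensor readings taken at time `now` (in seconds)."""
        if pir_motion:
            self._last_pir = now
        if radar_present:
            self._last_radar = now

        if not self.occupied:
            # Entering: radar alone may be a fan or a curtain, so also
            # require recent PIR motion (a warm, moving body).
            if radar_present and now - self._last_pir <= self.pir_window_seconds:
                self.occupied = True
        else:
            # Staying: someone sitting still no longer trips the PIR, so
            # radar presence alone keeps the room marked as occupied.
            if now - self._last_radar > self.hold_seconds:
                self.occupied = False
        return self.occupied


fusion = OccupancyFusion()
print(fusion.update(0, radar_present=True, pir_motion=False))  # radar only
print(fusion.update(1, radar_present=True, pir_motion=True))   # radar + PIR
```

In this sketch the PIR sensor acts as a gate against radar-only false positives, while the radar covers the classic PIR weakness of losing track of people who stop moving.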

The project is also documented on this GitHub page, for anyone looking to build a little more data into their home automation system or even augment their home security system. We imagine that devices like this could be paired to great effect with a heating device like this, and we’ve seen some other interesting methods of determining occupancy, too.