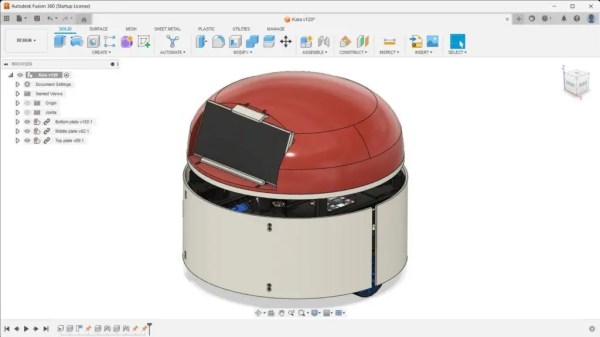

Ever heard of the Robot Operating System? It’s a BSD-licensed, open-source framework for controlling robots on a wide variety of hardware. Over the years we’ve shared quite a few projects that run ROS, but nothing on how to actually use it. Lucky for us, a robotics company called Clearpath Robotics, who use ROS for everything, have graciously decided to share some tips and tricks for getting started in ROS 101: An Introduction to the Robot Operating System.

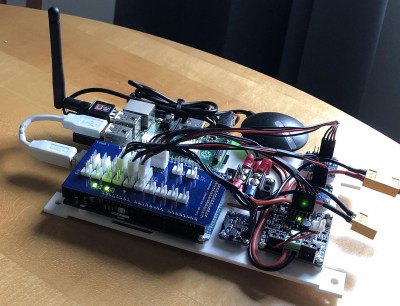

The beauty of ROS is that it is made up of a series of independent nodes which communicate with each other using a publish/subscribe messaging model. Because nodes only exchange messages, the hardware each one runs on doesn’t matter. You can use different computers, even different architectures. The example [Ilia Baranov] gives is using an Arduino to publish the messages, a laptop subscribed to them, and even an Android phone used to drive the motors. Talk about flexibility!
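To make the publish/subscribe idea concrete, here is a minimal sketch in plain Python. It does not use ROS at all: `ToyBus`, the topic names `range_cm` and `cmd_speed`, and the 20 cm threshold are all made up for illustration, and everything runs in a single process rather than across an Arduino, a laptop, and a phone. It only shows the shape of the pattern, where nodes know about topics and never about each other.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, List


class ToyBus:
    """In-process stand-in for a messaging layer (hypothetical, not a ROS API)."""

    def __init__(self) -> None:
        # Maps a topic name to the callbacks subscribed to it.
        self._subscribers: DefaultDict[str, List[Callable[[Any], None]]] = defaultdict(list)

    def subscribe(self, topic: str, callback: Callable[[Any], None]) -> None:
        self._subscribers[topic].append(callback)

    def publish(self, topic: str, message: Any) -> None:
        # Deliver the message to every subscriber of this topic, if any.
        for callback in list(self._subscribers.get(topic, [])):
            callback(message)


bus = ToyBus()
motor_log: List[float] = []  # records what the "motor" node was told to do


def laptop_node(distance_cm: float) -> None:
    # Stand-in for the laptop: turn a range reading into a speed command.
    speed = 0.0 if distance_cm < 20 else 0.5  # assumed threshold and speeds
    bus.publish("cmd_speed", speed)


def phone_node(speed: float) -> None:
    # Stand-in for the phone driving the motors: just record the command.
    motor_log.append(speed)


bus.subscribe("range_cm", laptop_node)
bus.subscribe("cmd_speed", phone_node)

# Stand-in for the Arduino: publish a few range readings.
for reading in (100, 50, 10):
    bus.publish("range_cm", reading)

print(motor_log)  # [0.5, 0.5, 0.0]
```

Swapping out any one node (say, a different sensor publishing on `range_cm`) leaves the others untouched, which is the flexibility the post is talking about. In real ROS the bus is replaced by networked topics, so the nodes can live on different machines.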

It appears they will be doing a whole series of these 101 posts, so check it out. They’ve already released numéro 2, ROS 101: A Practical Example. It even includes a ready-to-go Ubuntu disc image with ROS pre-installed to mess around with in VMware Player!

And to get you inspired to use ROS, check out this Android-controlled robot built on it! Or how about a ridiculous wheelchair-turned-creepy-face-tracking-robot?