Control panels of a pre-digitalization nuclear plant look quite daunting, with countless dials, buttons and switches that all make perfect sense to a trained operator, but seem as random as those of the original Enterprise bridge in Star Trek to the average person. This makes the reconstruction of part of the RBMK reactor control by the [Chornobyl Family] on YouTube a fun way to get comfortable with one of the most important elements of this type of reactor’s controls.

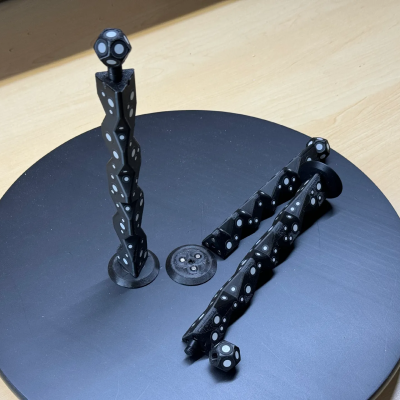

The section built here covers the RBMK’s control rods, its automatic regulation, and emergency systems like AZ-5 and BAZ. The goal is not just to have a shiny display piece that you can put on the wall, but to make it function just like the real control panel, and to use it for demonstrations of the underlying control systems. The creators spent a lot of time talking with operators of the Chornobyl Nuclear Plant – which operated until the end of 2000 – to make the experience as accurate as possible.

Although no real RBMK reactor is being controlled by the panel, its ESP32-powered logic makes it work like the real deal, and it even uses a dot-matrix printer to log commands. Not only is this a pretty cool simulator, it’s also just the first element of what will be a larger recreation of an RBMK control room, with more videos in this series to follow.

Also covered in this video are the changes made after the accident at Unit 4 of the Chornobyl Nuclear Plant, which served to make RBMKs significantly safer, albeit at the cost of more complexity on the control panel.
