We often take for granted how easy it is to get information in today’s Internet-connected world. For electronics projects especially, datasheets are generally a few clicks away, as are instructions for building almost anything. Not so in the late ’80s, when ordering physical catalogs of chips and their datasheets was generally required.

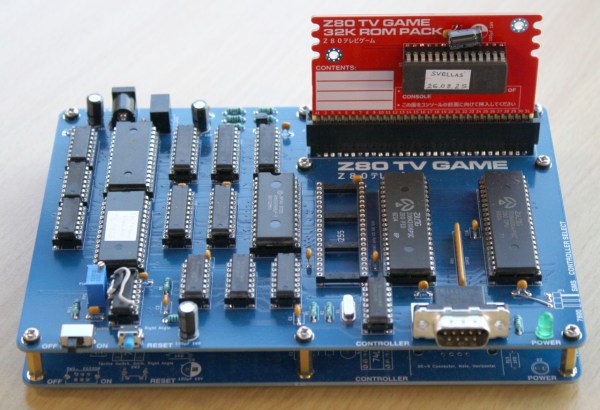

Navigating this landscape took a different skillset and far more determination than it does today, which makes it all the more impressive that a Japanese electronics hobbyist built a complete homebrew video game system from scratch in 1987. [Alex] recently discovered this project and produced a replica of it with a few modern touches.
