Pomodoro timers are a simple productivity tool. They help you work in dedicated chunks of time, usually 25 minutes at a stretch, before taking a short break and then beginning again. [Clovis Fritzen] built one of his own and added a few bonus features to round out its functionality.

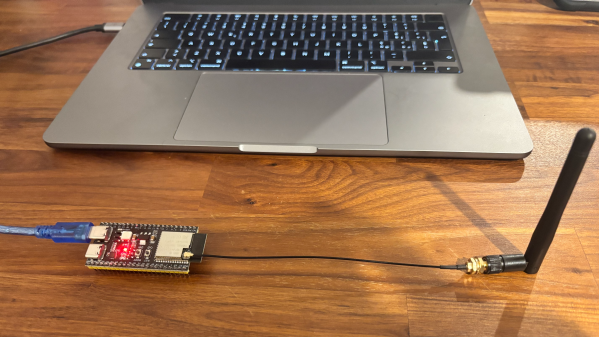

The timer is based on the popular ESP32-S2 microcontroller, which has the benefit of onboard WiFi. This lets the project query the Internet for the time and date via NTP, as well as for weather conditions and the exchange rate of the Brazilian real against the US dollar. The microcontroller is paired with an SHT21 sensor that reports temperature and humidity in the immediate environment, and an e-paper display that shows the timer status and other relevant information. A button on top of the device cycles between 15-, 30-, 45-, and 60-minute Pomodoro sessions, and a buzzer audibly calls time. It's all wrapped up in a cardboard housing that somehow pairs rather nicely with the e-paper display aesthetic.
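
The button-selected countdown is simple enough to model in a few lines. The following is a hypothetical Python sketch, not [Clovis Fritzen]'s firmware. The class name `PomodoroTimer`, its methods, and the behavior that changing the duration stops and resets the countdown are all assumptions made for illustration. Only the 15/30/45/60-minute options come from the build itself.

```python
from dataclasses import dataclass

# Durations the button cycles through, in minutes (per the article).
DURATIONS_MIN = (15, 30, 45, 60)


@dataclass
class PomodoroTimer:
    """Hypothetical model of a button-selected Pomodoro countdown."""
    index: int = 0                           # selected entry in DURATIONS_MIN
    remaining_s: int = DURATIONS_MIN[0] * 60  # seconds left in this session
    running: bool = False

    @property
    def duration_min(self) -> int:
        return DURATIONS_MIN[self.index]

    def select_next(self) -> None:
        """Button press (assumed): advance duration, wrapping 60 -> 15.

        Selecting a new duration stops the timer and reloads the countdown.
        """
        self.index = (self.index + 1) % len(DURATIONS_MIN)
        self.remaining_s = self.duration_min * 60
        self.running = False

    def start(self) -> None:
        """Begin counting down the currently selected duration."""
        self.running = True

    def tick(self, seconds: int = 1) -> bool:
        """Advance the countdown; return True once, when the buzzer should sound."""
        if not self.running:
            return False
        self.remaining_s = max(0, self.remaining_s - seconds)
        if self.remaining_s == 0:
            self.running = False
            return True
        return False
```

For example, calling `select_next()` once on a fresh timer selects 30 minutes. After `start()`, a `tick()` loop returns `True` exactly once when the session ends, which is the point where real hardware would drive the buzzer.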

If Pomodoro is your chosen method of productivity hacking, a project like this could suit you very well. We've featured a few similar builds before, too.