If you’re looking to manipulate video, FFmpeg is one of the most powerful tools out there. But with this power comes a considerable degree of complexity, and a learning curve that looks suspiciously like a brick wall. To make this incredible tool a bit less opaque, [Sam Lavigne] has developed a web interface that lets you play around with FFmpeg’s vast collection of audio and video filters.

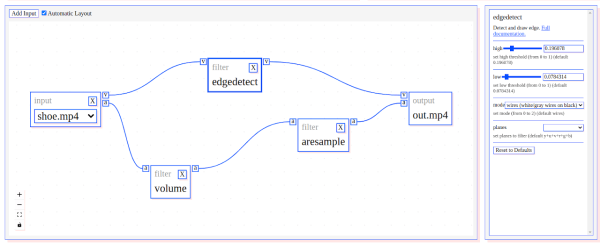

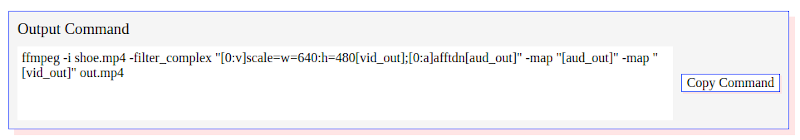

To try out a filter, you just need to select one from the window on the left and it will pop up in the central workspace. Here, the input, output, and any enabled filters show up as boxes that can be virtually “wired” together. Selecting a filter populates its options on the right-hand side, with sliders and input boxes that let you play around with its parameters. When you want to see the final result, just click “Render Preview” and wait a bit.
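
For a rough idea of what that wiring amounts to on the command line, here is a minimal Python sketch that joins a linear chain of filters into the string FFmpeg expects after `-vf`. This is not how the site itself builds its commands; the helper names (`build_filterchain`, `build_command`), the file names, and the choice of filters are all assumptions made for illustration. It relies only on standard FFmpeg filtergraph syntax: filters in a chain are separated by commas, and each filter's options are written as `key=value` pairs separated by colons.

```python
import shlex


def build_filterchain(filters):
    """Join (name, options) pairs into a linear FFmpeg filterchain string.

    Hypothetical helper: it does not escape special characters in option
    values, so it only suits simple numeric or keyword values.
    """
    parts = []
    for name, options in filters:
        if options:
            opts = ":".join(f"{key}={value}" for key, value in options.items())
            parts.append(f"{name}={opts}")
        else:
            # Filters with no options are written as just their name.
            parts.append(name)
    return ",".join(parts)


def build_command(input_path, output_path, filters):
    """Return an argv list that applies the chain with FFmpeg's -vf option."""
    return ["ffmpeg", "-i", input_path, "-vf", build_filterchain(filters), output_path]


# Example chain: downscale to 640 wide, drop saturation to zero, mirror horizontally.
chain = [
    ("scale", {"w": 640, "h": -2}),  # h=-2 keeps the aspect ratio with an even height
    ("hue", {"s": 0}),               # s=0 removes all color saturation
    ("hflip", {}),                   # horizontal flip takes no options
]

command = build_command("input.mp4", "output.mp4", chain)  # placeholder file names
print(shlex.join(command))
```

The printed line can be pasted into a terminal, or the `command` list can be handed to `subprocess.run` on a machine with FFmpeg installed. Graphs with multiple inputs or branches need `-filter_complex` and labeled pads, which this sketch does not attempt.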

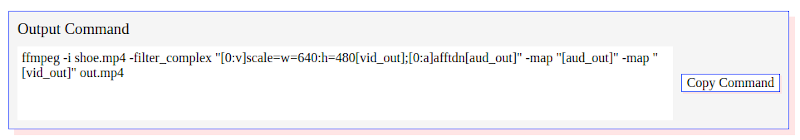

If there is any downside, it’s that the overhead of running in the browser doesn’t leave the tool a lot of horsepower. Even with the relatively low resolution of the demo videos available, the console output at the top of the page shows FFmpeg sometimes flirting with a processing speed measured in single-digit frames per second. Still, for a filter playground, it gets the job done. Perhaps the best part of the whole tool is that you can then copy your properly formatted command right out of the browser window and into your terminal so you can put it to work on your local files.

FFmpeg is one of those programs you should really be familiar with because it often proves useful in unexpected ways. The ability to manipulate audio and video with just a few keystrokes can really come in handy, and we’ve seen this open-source tool used for everything from compressing podcasts onto floppy disks to overlaying real-time environmental data onto a video stream.