Lacking a DVD drive, [jg] was watching a TV series in the form of a bunch of .avi video files. Of course, when every episode contains a full intro, it is only a matter of time before that gets too annoying to sit through.

The usual method of skipping the intro on a plain video file is a simple one:

- Manually drag the playback forward past the intro.

- Oops that’s too far, bring it back.

- Ugh reversed it too much, nudge it forward.

- Okay, that’s good.

[jg] was certain there was a better way, and the solution was using audio fingerprinting to insert chapter breaks. The plain video files now have a chapter breaks around the intro, allowing for easy skipping straight to content. The reason behind selecting this method is simple: the show intro is always 52 seconds long, but it isn’t always in the same place. The intro plays somewhere within the first two to five minutes of an episode, so just skipping to a specific timestamp won’t do the trick.

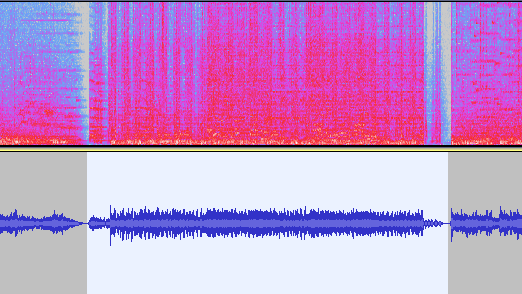

The first job is to extract the audio of an intro sequence, so that it can be used for fingerprinting. Exporting the first 15 minutes of audio with ffmpeg easily creates a wav file that can be trimmed down with an audio editor of choice. That clip gets fed into the open-source SoundFingerprinting library as a signature, then each video has its audio track exported and the signature gets identified within it. SoundFingerprinting therefore detects where (down to the second) the intro exists within each video file.

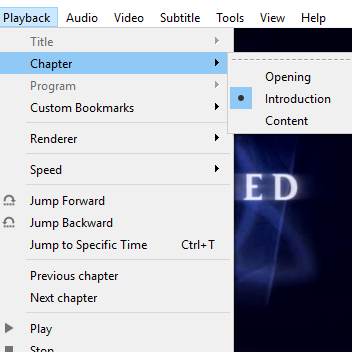

Marking out chapter breaks using that information is conceptually simple, but ends up being a bit roundabout because it seems .avi files don’t have a simple way to encode chapters. However, .mkv files are another matter. To get around this, [jg] first converts each .avi to .mkv using ffmpeg then splices in the chapter breaks with mkvmerge. One important element is that the reformatting between .avi and .mkv is done without completely re-encoding the video itself, so it’s a quick process. The result is a bunch of .mkv files with chapter breaks around the intro, wherever it may be!

The script is available here for anyone to play with, and the project page is a good learning reference because [jg] kindly provides all the command-line options used for each tool. Interested in using audio fingerprinting in your own projects? Remember to also check out Olaf, the Overly Lightweight Acoustic Fingerprinting method that can be implemented in embedded systems and web browsers.