You probably get a few security envelopes in the mail each week. Some of them actually do a good job of obscuring their contents, even if you hold the envelope up to the light. But have you ever taken the time to appreciate the beauty of security envelope patterns? Yeah, I didn’t think so.

The really interesting thing is just how many different patterns are out there when a dozen or so would probably cover it. In my experience, many utilities and higher-end companies create their own security patterns for mailing out statements and the like, which alone adds an unknown number of designs to the total.

So, what did people do before security envelopes? When exactly did they come along? And how many patterns are out there? Let’s take a look beneath the flap.

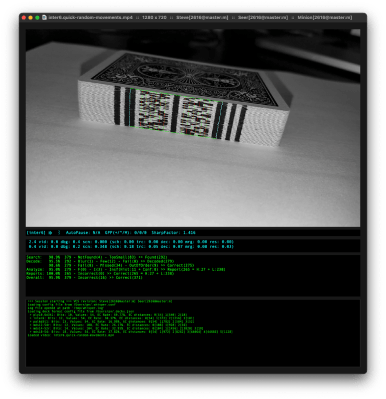

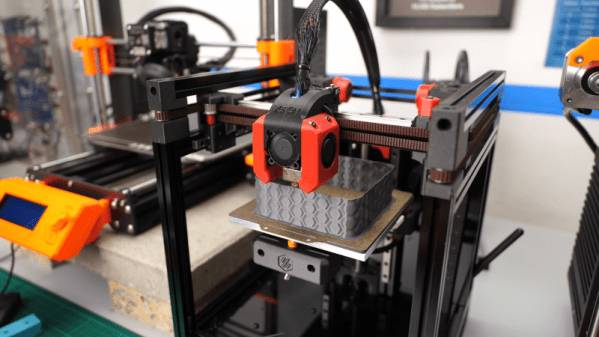

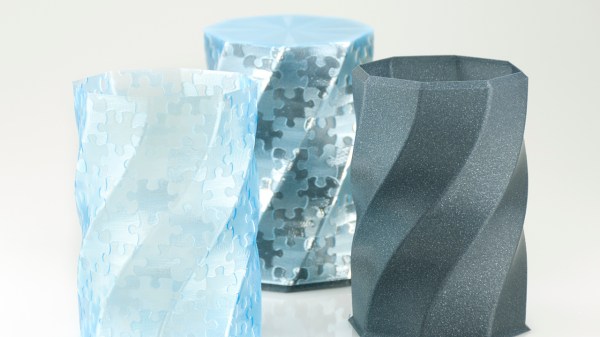

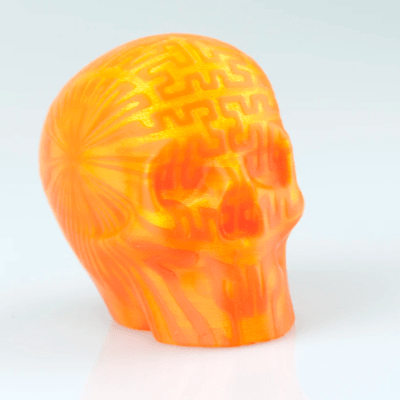

At its core, the technique is straightforward: skin an image onto a 3D print by varying the print speed in specific locations and, thereby, varying just how much plastic oozes out of the nozzle. While the concept seems simple, the result is stunning.
