Ubuntu just came out with the new long-term support version of their desktop Linux operating system. It’s got a few newish features, including incorporating the “snap” package management format. One of the claims about “snaps” is that they’re more secure — being installed read-only and essentially self-contained makes them harder to hack across applications. In principle.

[mjg59] took issue with their claims of increased cross-application security. And rather than just moan, he patched together an exploit that’s disguised as a lovable teddy bear. The central flaw is something like twenty years old now; X11 has no sense of permissions and any X11 application can listen in on the keyboard and mouse at any time, regardless of which application the user thinks they’re providing input to. This makes writing keylogging and command-insertion trojans effortless, which is just what [mjg59] did. You can download a harmless version of the demo at [mjg59]’s GitHub.

This flaw in X11 is well-known. In some sense, there’s nothing new here. It’s only in light of Ubuntu’s claim of cross-application security that it’s interesting to bring this up again.

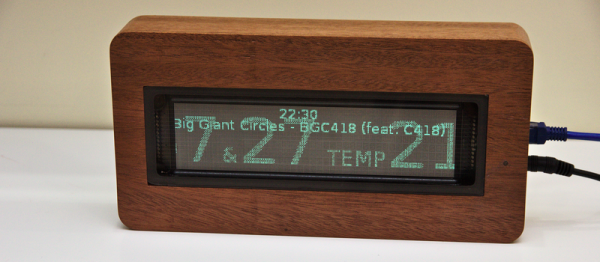

And the teddy bear in question? Xteddy dates back from when it was cool to display a static image in a window on a workstation computer. It’s like a warmer, cuddlier version of Xeyes. Except it just sits there. Or, in [mjg59]’s version, it records your keystrokes and uploads your passwords to shady underground characters or TLAs.

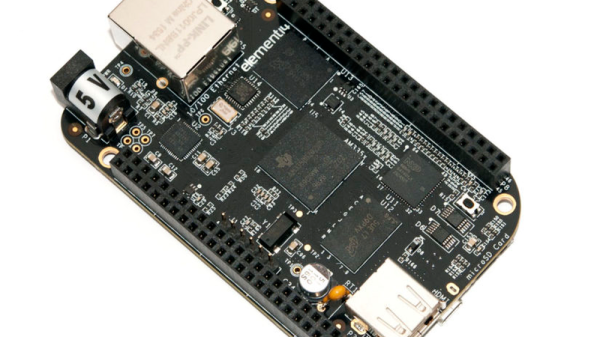

We discussed Snappy Core for IoT devices previously, and we think it’s a step in the right direction towards building a system where all the moving parts are only loosely connected to each other, which makes upgrading part of your system possible without upgrading (or downgrading) the whole thing. It probably does enhance security when coupled with a newer display manager like Mir or Wayland. But as [mjg59] pointed out, “snaps” alone don’t patch up X11’s security holes.