Some hacks are born of genius or necessity, and others from our sheer ham-fisted incompetence. This is not a story about the first kind. But it did give me an excuse to show how easy it is to design WiFi-connected devices that work the way you want them to, rather than the way the manufacturer had in mind.

It started out as a sensible idea – consumer electronics in Vietnam have many different electric plug types for mains AC power: A, C, G, F, and I are fairly present, with A and C being most common. For a quick review of what all those look like, this website sums it up nicely. There are universal power adapters available of course, but they tend to fit my most common type (C) poorly, resulting in intermittent power loss whenever you sneeze. So I figured I should replace all the plugs on my devices to be A-type (common to those of you in North America), as it holds well in all the power bar types I have, mainly leftover server PDUs.

This was very straightforward until I got to my desk lamp. Being a fancy Xiaomi smart lamp, they had opted to hide a transformer in the plug with such small dimensions that I failed to notice it. So instead of receiving a balmy 12 volts DC, it received 220 volts AC. With a bright flash and bang, it illuminated my desk one final time.

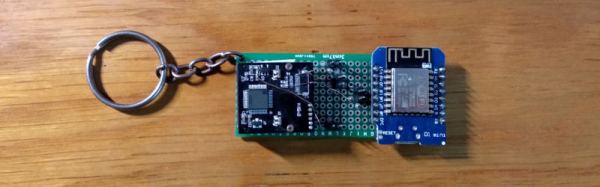

Continue reading “Fried Desk Lamp Reborn: How To Use ESP8266 To Build Connected Devices”