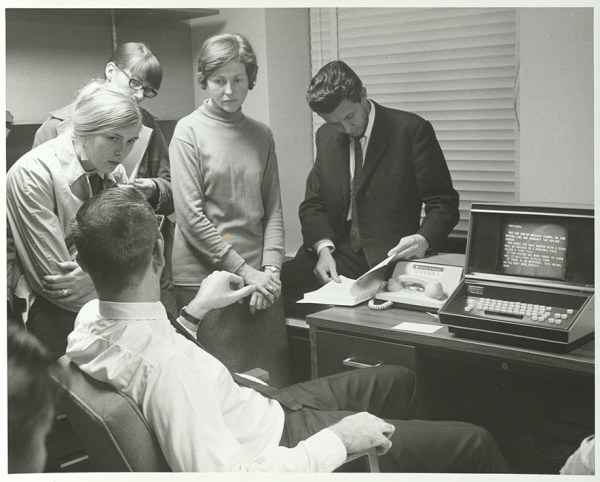

HP calculators, slide rules, and Forth all have something in common: reverse polish notation or RPN. Admittedly, slide rules don’t really have RPN, but you work problems on them the same way you do with an RPN calculator. For whatever reason, RPN didn’t really succeed in the general marketplace, and you might wonder why it was ever a thing. The biggest reason is that RPN is very easy to implement compared to working through proper algebraic, or infix, notation. In addition, in the early years of computers and calculators, you didn’t have much to work with, and people were used to using slide rules, so having something that didn’t take a lot of code that matched how users worked anyway was a win-win.

What is RPN?

If you haven’t encountered RPN before, it is an easy way to express math without ambiguity. For example, what’s 5 + 3 * 6? It’s 23 and not 48. By order of operations you know that you have to multiply before you add, even if you wrote down the multiplication second. You have to read through the whole equation before you can get started with math, and if you want to force the other result, you’ll need parentheses.

With RPN, there is no ambiguity depending on secret rules or parentheses, nor is there any reason to remember things unnecessarily. For instance, to calculate our example you have to read all the way through once to figure out that you have to multiply first, then you need to remember that is pending and add the 5. With RPN, you go left to right, and every time you see an operator, you act on it and move on. With RPN, you would write 3 6 * 5 +.

While HP calculators were the most common place to encounter RPN, it wasn’t the only place. Friden calculators had it, too. Some early computers and calculators supported it but didn’t name it. Some Soviet-era calculators used it, too, including the famous Elektronika B3-34, which was featured in a science fiction story in a Soviet magazine aimed at young people in 1985. The story set problems that had to be worked on the calculator.

Continue reading “In Praise Of RPN (with Python Or C)” →