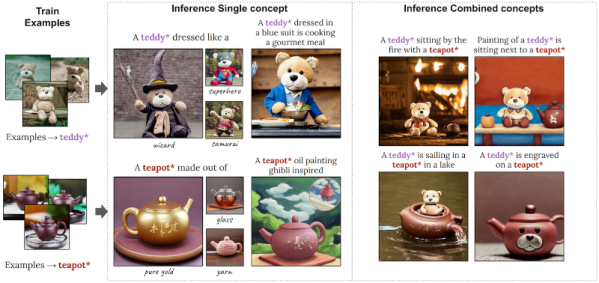

Dan Maloney wanted to design a part for 3D printing. OpenSCAD is a coding language for generating 3D objects. ChatGPT can write code. What could possibly go wrong? You should go read his article because it’s enlightening and hilarious, but the punchline is that the AI’s code ran afoul of syntax errors, yet it also gave him enough of a foothold that he could teach himself enough OpenSCAD to get the project done anyway. As with many people who have asked the AI to create some code, Dan found that it’s not as good as asking someone who knows what they’re doing, but that it’s also better than nothing.

And this is where I start grumbling. When you type your desires into the word-follower machine, your alternative isn’t nothing. Your alternative is to fire up a search engine instead and type “openscad tutorial”. That, for nearly any human endeavor, will get you a few good guides, written by humans who are probably expert in the subject in question, and which are aimed at teaching you the thing that you want to learn. It doesn’t get better than that. You’ll be up and running with your design in no time.

Indeed, if you think about the relevant source material that the LLM was trained on, it’s exactly these tutorials. It can’t possibly do better than the best of them, although the resulting average tutorial might be better than the worst you’ll find. (Some have speculated on what happens when the entire Internet is filled with these generated texts – what will future AIs learn from?)

In Dan’s case, though, he didn’t necessarily want to learn OpenSCAD – he just wanted the latch designed. But in the end, he had to learn enough OpenSCAD to get the AI code compiling without error. He spent an hour learning OpenSCAD and now he’s good to go on his next project too.

So the next time you hear someone say that they got an answer back from a large language model that wasn’t perfect, but it was “better than nothing”, think critically about whether “nothing” is really the right benchmark.

Do you really want to learn nothing? Do you really have no resources to get started with? I would claim that we have the most amazing set of tutorial resources the world has ever known at our fingertips. Compared to the ability to teach millions of humans to achieve their own goals, the LLM party tricks look kinda weak, in my opinion.