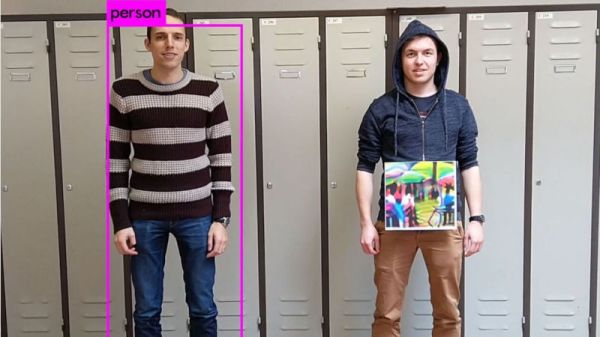

Ever since we first saw the nightmarish artwork produced by Google DeepDream and the ridiculous faux paintings produced from neural style transfer, we’ve been aware of the ways machine learning can be applied to visual art. With commercially available trained models and automated pipelines for generating images from relatively small training sets, it’s now possible for developers without theoretical knowledge of machine learning to easily generate images, provided they have sufficient access to GPUs. Filmmaker [Kira Bursky] took this a step further, creating a surreal short film that features characters and textures produced from image sets.

She began with about 150 photos of her face, 200 photos of film locations, 4600 photos of past film productions, and 100 drawings as the main datasets.

Training the film set photos on models of faces and bedrooms, on the other hand, produced abstract textures and “surreal and eerie faces like a fever dream”. Perhaps it’s the lack of recognizable characteristics in the transformed images, which offer none of the familiar anchors of facial features, that gives them such a surreal feel.

[Kira] certainly used these results to her advantage, brainstorming a concept for a short film that revolves around her main character experiencing nightmares. Although her objective was to convey a series of emotionally striking scenes, the models she used to produce those scenes are also quite interesting.

She started off by using the MiDaS model, created by a team of researchers from ETH Zurich and Intel, to generate monocular depth maps. These maps assign each region of an image a depth value relative to the other regions in the same image. She also used Mask R-CNN to mask out the backgrounds in generated faces, then combined her generated images in Photoshop to create the main character for her short film.
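To make those two steps a little more concrete, here is a minimal sketch in plain Python. It does not call MiDaS or Mask R-CNN; it assumes you already have a grid of relative depth values and a binary foreground mask from models like those, and the function names `normalize_depth` and `mask_background` are hypothetical helpers made up for illustration. The toy values are invented.

```python
def normalize_depth(depth):
    """Rescale a 2D grid of relative depth values to the range [0.0, 1.0]."""
    flat = [v for row in depth for v in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        # A completely flat depth map carries no relative information.
        return [[0.0 for _ in row] for row in depth]
    return [[(v - lo) / (hi - lo) for v in row] for row in depth]


def mask_background(image, mask, fill=0):
    """Keep pixels where the mask is truthy; replace the rest with `fill`."""
    return [
        [pixel if keep else fill for pixel, keep in zip(img_row, mask_row)]
        for img_row, mask_row in zip(image, mask)
    ]


# Toy 2x3 inputs (assumed values, standing in for real model output).
raw_depth = [[2.0, 4.0, 6.0],
             [2.0, 8.0, 10.0]]
depth01 = normalize_depth(raw_depth)

image = [[10, 20, 30],
         [40, 50, 60]]
mask = [[0, 1, 1],
        [0, 1, 0]]
cutout = mask_background(image, mask)
```

A normalized map like `depth01` is the sort of grayscale image that compositing tools can consume, while `cutout` stands in for a face with its background removed before it goes into Photoshop.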

Bringing the results together, she used the depth map videos to animate a 3D camera blur, which makes the footage less disorienting by giving viewers anchor points. She also used them to create a displacement map that heightens the sense of depth and movement within the scenes. In After Effects, she overlaid dust and film grain effects to give the final result a crisper look. The result is a surprisingly cinematic film made entirely of images and videos generated from machine learning models. With the help of the depth adjustments, it almost looks like something that you might see in a nightmare.
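For readers curious what a displacement map does in principle, the rough idea can be sketched in a few lines of Python. This is not how After Effects implements it, and not necessarily how [Kira] configured it; it assumes a depth map already normalized to the range 0 to 1, treats 0.5 as "no shift", and uses hypothetical helper names `displace_row` and `displace_image`.

```python
def displace_row(row, depth_row, max_shift):
    """Shift pixels horizontally in proportion to their depth value.

    depth_row holds values in [0, 1]; 0.5 means no shift (an assumption
    made for this sketch). Samples outside the row are clamped to the edge.
    """
    width = len(row)
    out = []
    for x in range(width):
        offset = round((depth_row[x] - 0.5) * 2 * max_shift)
        src = min(max(x + offset, 0), width - 1)
        out.append(row[src])
    return out


def displace_image(image, depth, max_shift):
    """Apply displace_row to every row of a 2D image."""
    return [displace_row(r, d, max_shift) for r, d in zip(image, depth)]


# Toy single-row image with an assumed depth map.
image = [[0, 1, 2, 3, 4]]
depth = [[0.5, 0.5, 1.0, 0.0, 0.5]]
shifted = displace_image(image, depth, max_shift=1)
```

Animating `max_shift` over time makes regions with different depth values slide by different amounts, which is one way a flat image can appear to gain parallax.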

Check out the result below:
