Do you or a loved one suffer from distorted 3D prints? Does your laser cutter produce parallelograms instead of rectangles? If so, you might be suffering from CNC skew miscalibration, and you could be entitled to significant compensation for your pain and suffering. Or, in the reality-based world, you could simply fix the problem yourself with this machine-vision skew correction system and get back to work.

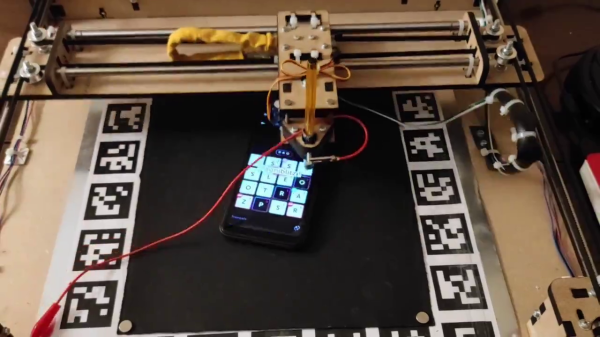

If you want to put [Marius Wachtler]’s solution to work for you, it’s probably best to review his earlier work on pressure-advance correction. The tool-mounted endoscopic camera he used in that project is key to this one, but rather than monitoring a test print for optimum pressure settings, he’s using it to detect X and Y axes that aren’t quite square to each other, which can turn what’s supposed to be a 90-degree angle into something else.

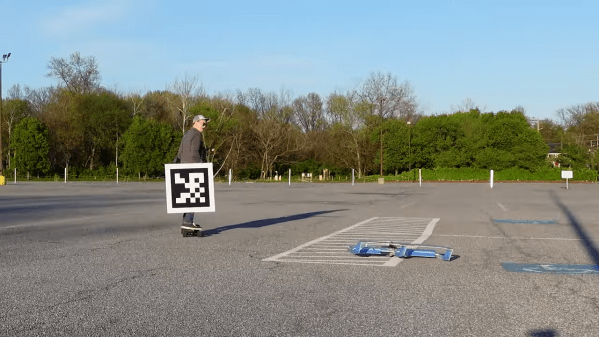

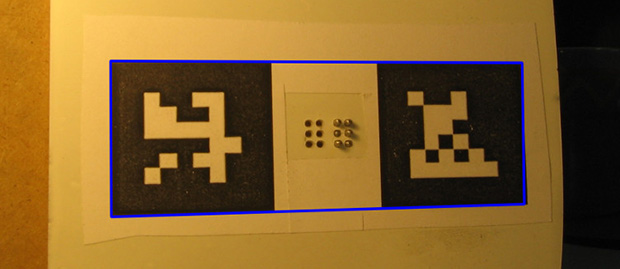

The key to detecting these problems is the so-called ChArUco board, which is a hybrid of a standard chessboard pattern with ArUco markers added to the white squares. ArUco markers are a little like 2D barcodes in that they encode an identifier in an array of black and white pixels. [Marius] provides a PDF of a ChArUco board that can be printed and pasted to a flat surface, along with a skew correction program that analyzes the ChArUco pattern and produces Klipper commands to adjust for any skew detected in the X-Y plane. The video below goes over the basics.

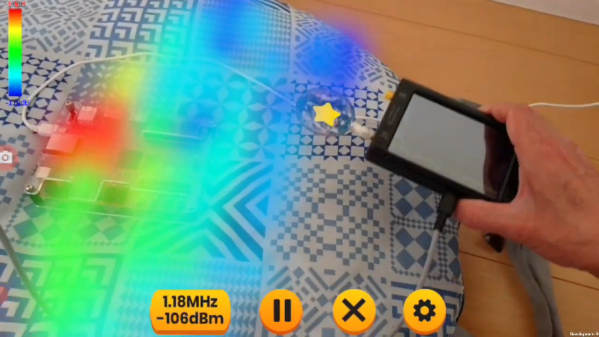
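
To make the "produces Klipper commands" step a little more concrete, here is a minimal sketch of how measured corner positions could be turned into a skew estimate and a `SET_SKEW` command. This is not [Marius]’s program; the function names and the sample coordinates are made up for illustration, and it assumes the four corners of a nominally rectangular pattern are labelled A, B, C, D going around the perimeter, so that AC and BD are the diagonals and AD is a side. Klipper’s `SET_SKEW XY=` takes those three lengths.

```python
import math

def xy_angle_error_deg(a, b, d):
    """Deviation from 90 degrees of the corner at A, formed by edges AB and AD.

    a, b, d are (x, y) tuples of measured positions (hypothetical inputs).
    """
    abx, aby = b[0] - a[0], b[1] - a[1]
    adx, ady = d[0] - a[0], d[1] - a[1]
    cos_t = (abx * adx + aby * ady) / (math.hypot(abx, aby) * math.hypot(adx, ady))
    return math.degrees(math.acos(cos_t)) - 90.0

def klipper_set_skew(a, b, c, d):
    """Format a Klipper SET_SKEW command from four measured corners.

    Assumes the corners are ordered around the perimeter, so AC and BD
    are the diagonals and AD is one side.
    """
    ac = math.dist(a, c)
    bd = math.dist(b, d)
    ad = math.dist(a, d)
    return f"SET_SKEW XY={ac:.3f},{bd:.3f},{ad:.3f}"

# Illustrative numbers only: a 100 mm square whose top edge is shifted 1 mm in X.
A, B, C, D = (0.0, 0.0), (100.0, 0.0), (101.0, 100.0), (1.0, 100.0)
print(f"corner error: {xy_angle_error_deg(A, B, D):.3f} deg")
print(klipper_set_skew(A, B, C, D))
```

With a camera doing the measuring, the corner coordinates would come from detected ChArUco corners rather than from calipers on a test print, but the geometry is the same: unequal diagonals mean the axes aren’t square.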

As clever and useful as ChArUco patterns seem to be, we’re surprised we haven’t seen them used for more than this CNC toolpath visualization project (although we do see the occasional appearance of ArUco). We wonder what other applications there might be for these boards. OpenCV supports them, so let us know what you come up with.
