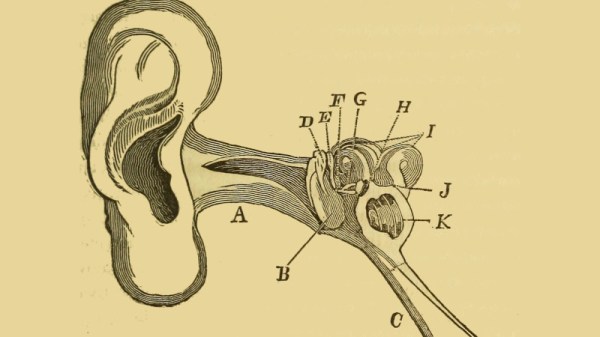

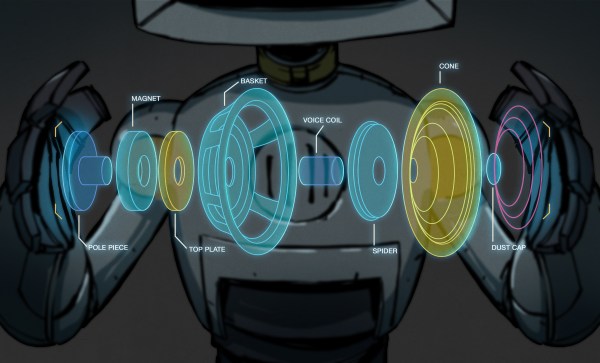

For as many speakers as someone can cram into a surround sound system, humans still (generally) have only two ears to listen with. This means that, for recording purposes, it’s possible to capture incredibly vivid three-dimensional sound with just two microphones, provided that each microphone sits inside a physical replica of a human ear. The replica ears shape incoming sound much as real outer ears do, so the recording preserves the cues a real listener would hear. As [David Green] demonstrates, these systems don’t need to be very expensive.

This build goes beyond simply recording through model ears. The silicone ears are also mounted on a styrofoam mannequin head, which provides some acoustic isolation between the two microphones, much like a real human head. The ears sit in anatomically appropriate locations with the microphones installed inside, and the whole assembly is mounted on a PVC rig alongside a camera, so it records binaural audio of whatever [David] points the camera at.

Although he ran into some issues connecting the two microphones with 19th-century technology instead of soldering everything together, the build eventually came together for only around $70 USD. The build is a bit dated, though, so prices may have changed since then. It’s still a great way to produce realistic stereo sound without breaking the bank, but it’s not the only way to get the job done.