When there are so many single board computers and other products aimed at providing children with the means to learn about programming and other skills, it is easy to forget a time before the Arduino or the Raspberry Pi and their imitators, when a computer was very much an expensive closed box.

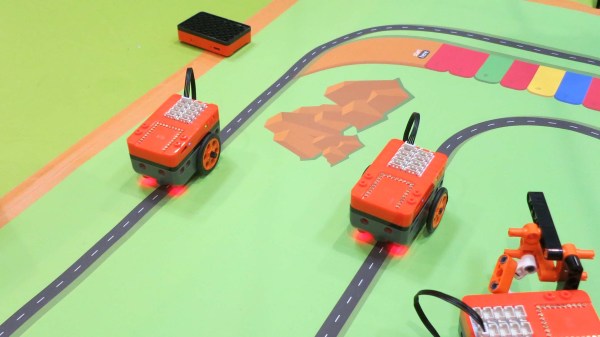

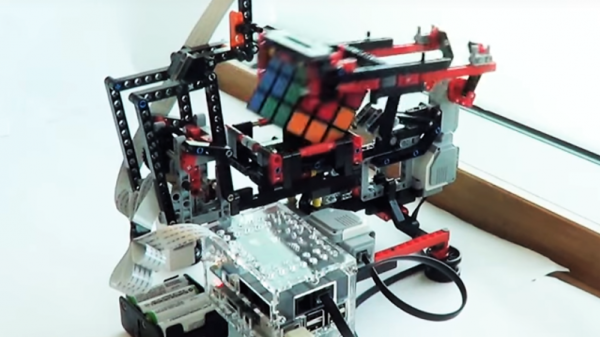

Into this late-’90s vacuum left in the wake of the 8-bit home computer revolution came LEGO’s Mindstorms kits, a box of interlocking goodies with a special programmable brick, which gave kids the chance to make free-form computerized robotic projects all of their own. The recent news that after 24 years the company will discontinue the Mindstorms range at the end of the year thus feels like the end of an era to anyone who has ridden the accessible microcontroller train since then.

What became Mindstorms has its roots in the MIT Media Lab’s Programmable Brick project, a series of chunky LEGO bricks with microcontrollers and LEGO brick contacts for motors and sensors. Their Logo programming language implementation was eschewed by LEGO in favor of a graphical system on a host computer, and the Mindstorms kit was born. The brand has since been used on a series of iterations of the controller, and a range of different robotics kits.

In 1998, a home computer had morphed from something programmable in BASIC to a machine that ran Windows and Microsoft Office. Boards such as Parallax’s BASIC Stamp were available but expensive, and didn’t come with anything to control. The Mindstorms kit was revolutionary then in offering an accessible fully programmable microcontroller in a toy, along with a full set of LEGO including motors and sensors to use with it.

We’re guessing Mindstorms has been seen off by better and cheaper single board computers here in 2022, but that doesn’t take away its special place in providing ’90s kids with their first chance to make a proper robot their way. The kits have found their place here at Hackaday, but perhaps the fact that most of the projects we’ve featured using them are now a few years old underlines why they are to meet their end. So long, Mindstorms, you won’t be forgotten!

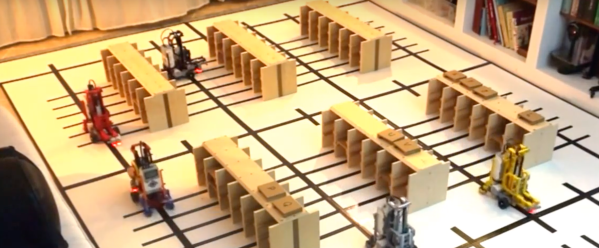

Header image: Mairi (CC BY-SA 3.0).