The US National Highway Traffic Safety Administration (NHTSA) report on the May 2016 fatal accident in Florida involving a Tesla Model S in Autopilot mode just came out (PDF). The verdict? “the Automatic Emergency Braking (AEB) system did not provide any warning or automated braking for the collision event, and the driver took no braking, steering, or other actions to avoid the collision.” The accident was a result of the driver’s misuse of the technology.

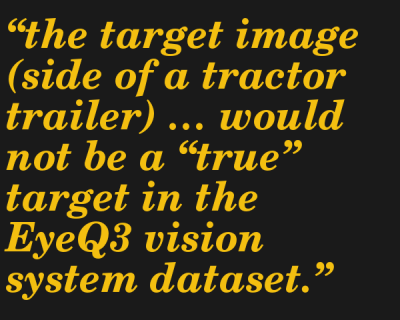

This places no blame on Tesla because the system was simply not designed to handle obstacles travelling at 90 degrees to the car. Because the truck that the Tesla plowed into was sideways to the car, “the target image (side of a tractor trailer) … would not be a “true” target in the EyeQ3 vision system dataset.” Other situations that are outside of the scope of the current state of technology include cut-ins, cut-outs, and crossing path collisions. In short, the Tesla helps prevent rear-end collisions with the car in front of it, but has limited side vision. The driver should have known this.

The NHTSA report concludes that “Advanced Driver Assistance Systems … require the continual and full attention of the driver to monitor the traffic environment and be prepared to take action to avoid crashes.” The report also mentions the recent (post-Florida) additions to Tesla’s Autopilot that help make sure that the driver is in the loop.

The takeaway is that humans are still responsible for their own safety, and that “Autopilot” is more like anti-lock brakes than it is like Skynet. Our favorite footnote, in carefully couched legalese: “NHTSA recognizes that other jurisdictions have raised concerns about Tesla’s use of the name “Autopilot”. This issue is outside the scope of this investigation.” (The banner image is from this German YouTube video where a Tesla rep in the back seat tells the reporter that he can take his hands off the wheel. There may be mixed signals here.)

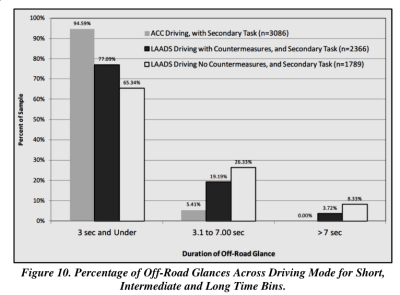

There are other details that make the report worth reading if, like us, you would like to see some more data about how self-driving cars actually perform on the road. On one hand, Tesla’s Autosteer function seems to have reduced the rate at which their cars got into crashes. On the other, increasing use of the driving assistance functions comes with an increase in driver inattention for durations of three seconds or longer.

People simply think that the Autopilot should do more than it actually does. Per the report, this problem of “driver misuse in the context of semi-autonomous vehicles is an emerging issue.” Whether technology will improve fast enough to protect us from ourselves is an open question.

[via Popular Science].

The basic components behind the build are a current transformer, a NeoPixel LED strip, and an ATtiny44 to run the show. But the quality of the build is where [ch00f]’s project really shines. The writeup is top notch: [ch00f] goes to great lengths showing every detail of the build. The project log covers the challenges of finding appropriate wiring and enclosures for the high-power AC build, explains how to interface the current-sense transformer to the microcontroller, and shares [ch00f]’s techniques for testing the fit of components to ensure the best chance of getting the build right the first time. If you’ve ever gotten a breadboarded prototype humming along sweetly, only to suffer as you try to cram all the pieces into a tiny plastic box, you’ll definitely pick something up here.
