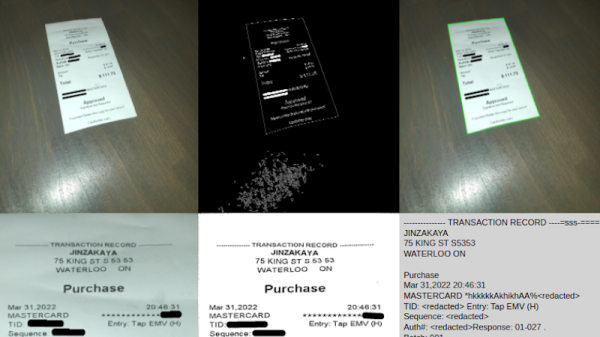

Running an optical character recognition (OCR) server might sound like a job for powerful hardware: a rack-mounted, water-cooled machine, or at least a nice desktop or laptop. But if time isn’t a constraint, almost anything will do. [Hemant] has a long-running personal project that works through a large volume of image data, and rather than turn to more typical hardware, he set up the OCR server on an iPhone 8 running entirely on solar power.

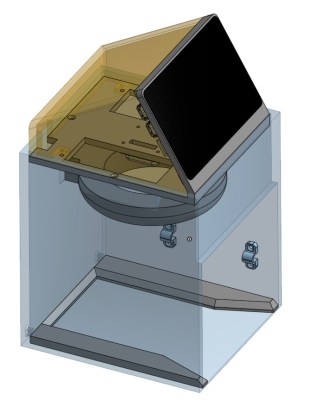

Part of what makes this task feasible on low-powered hardware is Apple’s Vision framework, which uses machine learning for tasks like character recognition, and runs on an iPhone just as easily as on a Mac. The phone’s built-in battery already provides the first step of an off-grid setup, though this build adds a separate power bank to make it easier to integrate the phone with the solar panel. On the software side, [Hemant] reports that the true challenge wasn’t setting up the server so much as keeping the iPhone from going to sleep or suspending his program, so that it could run full-time.

A system like this running off-grid, especially considering the costs of the solar panel and power bank, might seem counterproductive. But compared with the electricity cost of running the same software on his server, he estimates he saves about $10 per month with this setup, which means the extra hardware pays for itself in somewhere around 2-3 years. Not too bad for a phone that would otherwise have ended up in a landfill. Old phones can be surprisingly good choices for servers, too. It helps if they can run Linux, but plenty of phones will support server applications even when running their native OS.
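
For anyone weighing a similar build, the payback arithmetic is easy to check. The sketch below uses only the two figures quoted above (about $10 per month saved, 2-3 years to break even) to back out the hardware cost those numbers imply. The function names are made up for illustration, and the resulting dollar range is an inference from those estimates, not a price [Hemant] reported.

```python
def implied_upfront_cost(monthly_savings, payback_years):
    """Upfront cost that would be recovered in `payback_years` at this savings rate."""
    return monthly_savings * 12 * payback_years


def payback_months(upfront_cost, monthly_savings):
    """Months needed for the monthly savings to cover the upfront cost."""
    if monthly_savings <= 0:
        raise ValueError("monthly_savings must be positive")
    return upfront_cost / monthly_savings


# Figures taken from the estimate in the article.
MONTHLY_SAVINGS = 10.0        # dollars saved per month
PAYBACK_YEARS = (2, 3)        # quoted payback range

# Implied cost of the solar panel and power bank (an inference, not a reported price).
low = implied_upfront_cost(MONTHLY_SAVINGS, PAYBACK_YEARS[0])
high = implied_upfront_cost(MONTHLY_SAVINGS, PAYBACK_YEARS[1])
print(f"Implied hardware cost: ${low:.0f} to ${high:.0f}")
```

Plugging in a different panel price or local electricity rate through `payback_months` gives a quick sense of whether the same trick is worth it elsewhere.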