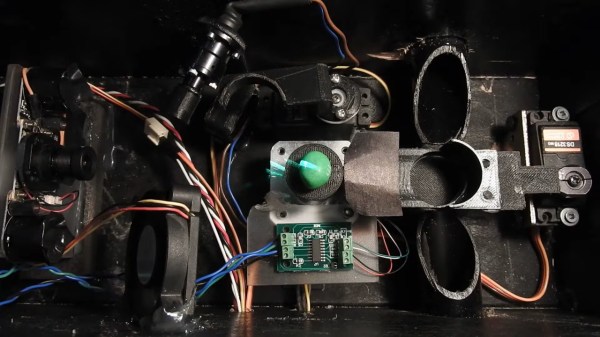

The diversity of sensors and other hardware available to our community can seem comprehensive, but many parts have still made little impact because of cost or scarcity. [Enginoor] has taken one of these, an industrial laser displacement sensor, as the sensing element of a 3D scanner.

This sensor measures distance, but it’s not one of the time-of-flight sensors we’re familiar with. Instead it works by triangulation, much like a photographic rangefinder: a detector set a known distance to one side of the laser sees the spot at a parallax angle that changes with range. These sensors are extremely expensive because of their high-precision construction, but happily they can be found at more affordable prices second-hand, pulled from decommissioned machinery.
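For a feel of the numbers behind that parallax principle, here is a minimal Python sketch of idealized triangulation. It assumes a simple pinhole model with the laser beam parallel to the detector's optical axis, which is a simplification of what real displacement sensors do internally. The function name and every parameter are hypothetical and are not taken from [Enginoor]’s build or from any sensor datasheet.

```python
def triangulate_distance(baseline_mm, focal_mm, spot_offset_mm):
    """Estimate range from the laser spot's position on the detector.

    Assumed (idealized) geometry: the laser fires parallel to the
    detector's optical axis, offset sideways by baseline_mm. A spot at
    range z is imaged spot_offset_mm away from the detector centre, and
    similar triangles give z = baseline * focal / offset.
    """
    if spot_offset_mm <= 0:
        raise ValueError("spot offset must be positive (spot not on detector?)")
    return baseline_mm * focal_mm / spot_offset_mm


# Hypothetical figures: 50 mm baseline, 20 mm lens, spot imaged 10 mm off-centre.
print(triangulate_distance(50.0, 20.0, 10.0))
```

Because the range varies inversely with the spot offset, a small error in locating the spot becomes a larger range error as the target moves away, which goes some way to explaining why precise optics matter so much in these parts.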

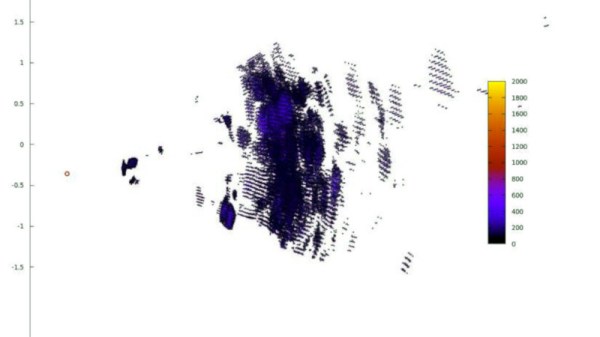

In this case the sensor is mounted on an X-Y gantry, and scans the part one point measurement at a time. The sensor is interfaced to a Teensy, which in turn spits the data back to a PC for processing. By [Enginoor]’s own admission it’s not the most practical of builds, but for us that’s not the point. We hope that bringing these parts to the attention of our community might see them used in other ways.
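As a rough illustration of how point-by-point scanning on a gantry can become a 3D data set on the PC side, here is a Python sketch. It is not [Enginoor]’s code: the serpentine scan order, the function names, and the idea of subtracting each reading from a reference distance to the scan bed are all assumptions made for the example.

```python
def raster_path(nx, ny, pitch_mm):
    """Return (x, y) gantry positions in serpentine order.

    Assumption: alternate rows are scanned in opposite directions so the
    gantry never has to fly back to the start of a row.
    """
    points = []
    for row in range(ny):
        cols = range(nx) if row % 2 == 0 else range(nx - 1, -1, -1)
        for col in cols:
            points.append((col * pitch_mm, row * pitch_mm))
    return points


def to_point_cloud(path, distances_mm, reference_mm):
    """Pair each gantry position with a height above the scan bed.

    reference_mm is the (hypothetical) sensor reading for the empty bed,
    so height = reference - measured distance.
    """
    if len(path) != len(distances_mm):
        raise ValueError("need exactly one distance reading per position")
    return [(x, y, reference_mm - d) for (x, y), d in zip(path, distances_mm)]


# Made-up readings for a 2 x 2 scan at 1 mm pitch, empty bed at 10 mm.
path = raster_path(2, 2, 1.0)
cloud = to_point_cloud(path, [10.0, 9.0, 8.0, 10.0], 10.0)
print(cloud)
```

The resulting list of (x, y, z) tuples is about the simplest possible point cloud, and could be written out as a plain text file for a mesh tool to import.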

We’ve featured huge numbers of 3D scanners over the years, including a look at how not to make one.