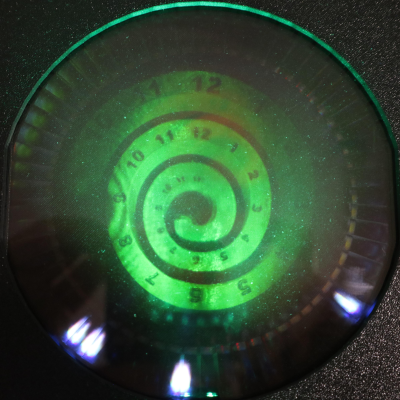

While working on a project that involved super-thin prints, [Julius Curt] came up with selective ironing, a way to put designs on the top surface of a print without adding any height.

For those unfamiliar, ironing is a technique in filament-based 3D printing that uses the extruder to smooth out top surfaces after printing them. The hot nozzle makes additional passes across a top surface, extruding a tiny amount in the process, which smooths out imperfections and leaves a much cleaner surface. Selective ironing is nearly the same process, but applied only in a certain pattern instead of across an entire surface.

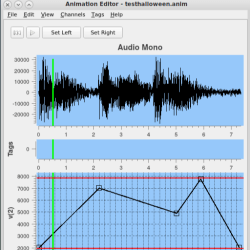

The concept is simple, but making it work was harder than expected. [Julius] settled on a combination of computer-aided design (CAD) work to define the pattern and a post-processing script to apply it. More specifically, one models the desired pattern into the object in CAD as a one-layer-tall feature. The script then removes that layer from the model while applying the modified ironing pattern in its place. In this way, one can define the pattern in CAD without actually adding any height to the printed object. You can see it in action in the video, embedded below.
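To make the idea concrete, here is a minimal Python sketch of that kind of post-processing step. It is not [Julius]'s script. It assumes the slicer writes a `;LAYER_CHANGE` comment at the start of each layer and tags features with `;TYPE:` comments such as `;TYPE:Ironing`, and the function names are hypothetical. It simply drops everything in the pattern layer except the ironing moves, so the nozzle stays at the previous layer's height; a real script would also have to deal with retractions, Z-hops, and travel moves.

```python
# Assumed slicer conventions (not taken from the original script):
LAYER_MARK = ";LAYER_CHANGE"   # comment that starts each layer
TYPE_PREFIX = ";TYPE:"         # comment that starts each feature section
IRONING = ";TYPE:Ironing"      # feature section holding ironing moves


def split_layers(lines):
    """Group G-code lines into chunks, starting a new chunk at each layer marker."""
    chunks = [[]]
    for line in lines:
        if line.strip() == LAYER_MARK:
            chunks.append([])
        chunks[-1].append(line)
    return chunks  # chunks[0] is the preamble before the first layer


def ironing_only(layer):
    """Keep only the lines that sit inside ironing sections of one layer."""
    kept, in_ironing = [], False
    for line in layer:
        stripped = line.strip()
        if stripped.startswith(TYPE_PREFIX):
            in_ironing = stripped == IRONING
        if in_ironing:
            kept.append(line)
    return kept


def selective_iron(lines, pattern_layer):
    """Replace layer `pattern_layer` (1-based) with just its ironing moves."""
    chunks = split_layers(lines)
    chunks[pattern_layer] = ironing_only(chunks[pattern_layer])
    return [line for chunk in chunks for line in chunk]
```

Calling `selective_iron(gcode_lines, n)` on a list of G-code lines returns a new list in which layer `n` contributes only its ironing section, which is the "remove the layer, keep the pattern" step in miniature.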

We’ve seen some interesting experiments in ironing 3D prints, including non-planar ironing and doing away with the ironing setting altogether by carefully tuning slicer settings so it is not needed. Selective ironing is another creative angle, and we can imagine it being used to embed a logo or part number as easily as a pattern.

Selective ironing is still experimental, but if you find yourself intrigued and would like to give it a try, head over to the GitHub repository, where you’ll find the script as well as examples to try out.
