[Punxsutawney Phil]’s prognostications aside, winter isn’t over up here in the Northern Hemisphere, and the snow keeps falling. If you’re sick of shoveling the driveway and the walk and you don’t have a kid handy to rope into the job, relax: this rapidly assembled junkyard RC snowblower will do just as crappy a job while you stay nice and warm inside.

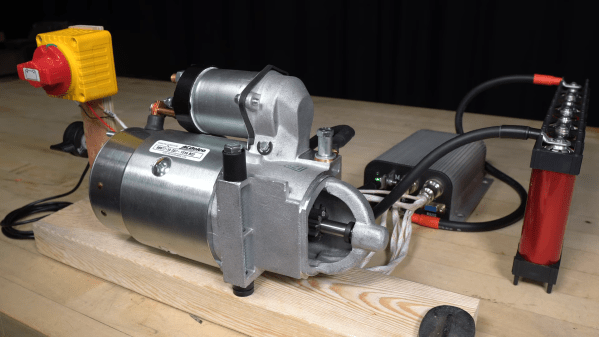

This build seemed to have a lot of potential at the start, based as it was on a second-hand track-drive snowblower, something that was presumably purpose-built for the job at hand. [Lucas] quickly got to work on it; he left the original gasoline engine to power the auger but took most of the transmission off so that each track could be driven separately with a wheelchair motor. That seemed like a solid idea as far as steering goes, but his choice to run the 24-volt motors from a single 12-volt deep-cycle battery worked against him out in the snow.
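For anyone wondering how two independently driven tracks turn into steering, here is a minimal sketch of the usual "tank mix" that converts a throttle stick and a steering stick into left and right track commands. We don't know how [Lucas]'s controller actually does it; the function name `mix_tracks` and the -1 to 1 input range are our own assumptions for illustration.

```python
def mix_tracks(throttle: float, steering: float) -> tuple[float, float]:
    """Map stick inputs in [-1, 1] to (left, right) track commands in [-1, 1].

    Hypothetical helper, not taken from the build: positive steering
    speeds up the left track and slows the right one, turning right.
    """
    left = throttle + steering
    right = throttle - steering
    # If either command exceeds full scale, shrink both by the same factor
    # so the ratio between the tracks (and thus the turn) is preserved.
    scale = max(1.0, abs(left), abs(right))
    return left / scale, right / scale


if __name__ == "__main__":
    print(mix_tracks(1.0, 0.0))   # straight ahead, both tracks equal
    print(mix_tracks(0.0, 1.0))   # spin in place, tracks opposed
    print(mix_tracks(0.5, 0.25))  # gentle right turn
```

Driving the tracks in opposite directions lets the machine pivot in place, which is the main appeal of this layout over a single drive motor with a differential.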

With a battery upgrade for better traction, the snowblower actually got around in the snow pretty well. [Lucas] also added some nice features, like a linear actuator to remotely engage the auger — a nice safety touch when kids and pets are around — and a motor to control the direction of the chute. Even these improvements weren’t enough, though; it worked insofar as it moved snow from where it was to where it wasn’t, but didn’t really move it very far. To the casual observer, it seems like there’s just not enough weight to the machine, allowing it to ride up over the snow rather than scraping the driveway clean. Check out the video below and see what you think.

Now, we’re not picking on [Lucas] here. Far from it — we enjoyed this build as much as some of his other stuff, like his scratch-built CO2 laser tube and his potty-mouthed approach to Kaizen tool organization. We still think this one has a lot of potential, and we’re glad he vowed to continue working on it for next winter.
