NASA is going back to the Moon! We’ll follow the crew of Artemis II every step of the way.

Continue reading “Following Artemis II’s Journey Around The Moon”

It’s easy to think of online console gaming as an invention of the 2000s. Microsoft made waves when Xbox Live dropped in 2002, with Nintendo and Sony scrambling to catch up with their own offerings that were neither as sleek nor as well-integrated.

However, if you were around a decade earlier, you might have experienced online console gaming much closer to the dawn of the Internet era. As far back as 1990, you could jump online with your Sega Mega Drive. But what did an online console feel like in the dial-up era?

Although generative language models have found little widespread, profitable adoption outside of putting artists out of work and giving tech companies an easy scapegoat for cutting staff, their underlying technology remains a fascinating area of study. Stepping back to the more innocent time of the late 2010s, before the cultural backlash, we could examine these models in their early stages. Or, we could see how even older hardware handles these types of machine learning algorithms in order to understand more about their fundamentals. [Damien Boureille] has put a 60s-era IBM as well as a PDP-11 to work training a transformer algorithm in order to take a closer look at it.

Given such old hardware, the task [Damien Boureille] is training his transformer to perform is a modest one: reversing a list of digits. This is a trivial problem for something like a Python program but much more difficult for a transformer. The model relies solely on self-attention and a residual connection. To fit within the 32KB memory limit of the PDP-11, it employs fixed-point arithmetic and lookup tables to replace computationally expensive functions. Training is optimized with hand-tuned learning rates and stochastic gradient descent, achieving 100% accuracy in 350 steps. In the real world, this means that he was able to get the training time down from hours or days to around five minutes.
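To give a feel for what fixed-point arithmetic and lookup tables look like in practice, here is a minimal Python sketch. It is not [Damien Boureille]'s code: the Q8.8 number format, the table resolution, and every function name are assumptions made purely for illustration. It computes a softmax, the kind of step used inside self-attention, with integers only once the table has been built.

```python
import math

# Assumed format for illustration: Q8.8 fixed point (8 fractional bits).
FRAC_BITS = 8
ONE = 1 << FRAC_BITS  # the integer that represents 1.0


def to_fixed(x):
    """Convert a float to its nearest Q8.8 integer representation."""
    return int(round(x * ONE))


def fixed_mul(a, b):
    """Multiply two Q8.8 values; the raw product carries 16 fractional bits."""
    return (a * b) >> FRAC_BITS


# Lookup table for exp(-k/16), k = 0..127, built once ahead of time.
# On a real small machine this table would be precomputed and stored.
EXP_STEP_BITS = 4
EXP_TABLE = [to_fixed(math.exp(-k / (1 << EXP_STEP_BITS))) for k in range(128)]


def fixed_exp_neg(q):
    """Approximate exp(q) for a Q8.8 value q <= 0 using only a table lookup."""
    index = (-q) >> (FRAC_BITS - EXP_STEP_BITS)  # rescale from 1/256 to 1/16 steps
    if index >= len(EXP_TABLE):
        return 0  # below about exp(-8) the result rounds to zero anyway
    return EXP_TABLE[index]


def fixed_softmax(scores):
    """Softmax over Q8.8 scores, returning Q8.8 probabilities."""
    top = max(scores)  # subtract the maximum so every exponent is <= 0
    exps = [fixed_exp_neg(s - top) for s in scores]
    total = sum(exps)  # at least ONE, because exp(0) is always included
    return [(e << FRAC_BITS) // total for e in exps]


if __name__ == "__main__":
    probs = fixed_softmax([to_fixed(2.0), to_fixed(0.0)])
    print([p / ONE for p in probs])
```

The trade-off is precision for speed and memory: each exponential becomes a shift and an array read, at the cost of results that are only accurate to a couple of decimal places.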

Not only does a project like this help us understand these tools, but it also goes a long way towards demonstrating that not every task needs a gigawatt datacenter to be useful. In fact, we’ve seen plenty of large language models and other generative AI running on computers no more powerful than an ESP32 or, if you need slightly more computing power, on consumer-grade PCs with or without GPUs.

Although the term ‘Iron Curtain’ from the Cold War brings to mind something like the Berlin Wall and its forbidding No Man’s Land, there was still active trade between the Soviet Union and the West. This included devices like the M4100/4 insulation tester that the [Three-phase] YouTube channel recently looked at, after previously poking at a 1967 USSR resistance bridge.

This particular unit dates to 1985, and comes in a rather nice-looking case that somewhat looks like bakelite. It’s rated for up to 1 gigaohm, putting out 1,000 V by using the crank handle. Because of the pristine condition of the entire unit, including seals, it was decided to not look at the internals but only test its functionality.

After running through the basic usage of the insulation tester it’s hooked up to a range of testing devices, which shows that it seems to be mostly still in working condition. The first issue noticed was that the crank handle-based generator was a bit tired, so that it never quite hit the maximum voltage.

With no parallax correction and no known last calibration date, it still measured to within about 10% of the actual value in some of the initial tests, but in later tests it was significantly off from the expected value. At this point the device was suspected of being faulty, but it defied being easily opened, so any repair will have to be put off for now. That said, it being in such good condition raises the prospect of it being an easy repair, hopefully in an upcoming video.

Continue reading “Testing A Soviet 1000 Volt Insulation Tester From 1985”

[Michel Jean] asked a question few others might: what exactly is going on under the hood of a classic HP scientific calculator when one presses the ∫ key? A numerical integration, sure, but how exactly? There are a number of useful algorithms that could be firing up when the integral button is pressed, and like any curious hacker [Michel] decided to personally verify what was happening.

[Michel] implemented different integration algorithms in C++ and experimentally compared them against HP calculator results. By setting up rigorous tests, [Michel] was able to conclude that the calculators definitely use Romberg-Kahan, developed by HP Mathematician William Kahan.

Selected by HP in 1979 for use in their scientific calculators, the Romberg-Kahan algorithm was kept in service for nearly a decade. Was it because the algorithm was fast and efficient? Not really. The reason it was chosen over others was on account of its robustness. Some methods are ridiculously fast and tremendously elegant at certain types of problem, but fall apart when applied to others. The Romberg-Kahan algorithm is the only one that never throws up its hands in failure; ideal for a general-purpose scientific calculator that knows only what its operator keys in, and not a lick more.
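For readers who want to see the general idea, below is a minimal sketch of textbook Romberg integration in Python. To be clear, this is the plain Romberg method and not HP's Romberg-Kahan variant, whose refinements are not described here; the function name, tolerance, and level limit are all assumptions chosen for illustration.

```python
import math


def romberg(f, a, b, tol=1e-10, max_levels=20):
    """Textbook Romberg integration of f over [a, b].

    Repeatedly halves the trapezoid step size and applies Richardson
    extrapolation to cancel successive error terms.
    """
    h = b - a
    row = [0.5 * h * (f(a) + f(b))]  # single-interval trapezoid estimate
    for level in range(1, max_levels + 1):
        h /= 2.0
        # Only the newly introduced midpoints need to be evaluated.
        n_new = 2 ** (level - 1)
        midpoints = sum(f(a + (2 * k + 1) * h) for k in range(n_new))
        new_row = [0.5 * row[0] + h * midpoints]
        # Richardson extrapolation across the row.
        for j in range(1, level + 1):
            factor = 4.0 ** j
            new_row.append((factor * new_row[j - 1] - row[j - 1]) / (factor - 1.0))
        # Stop once the best estimates of two consecutive levels agree.
        if abs(new_row[-1] - row[-1]) < tol:
            return new_row[-1]
        row = new_row
    return row[-1]  # best available estimate if the tolerance was not met


if __name__ == "__main__":
    print(romberg(math.sin, 0.0, math.pi))
```

Note that this plain version evaluates the integrand at both endpoints and uses a naive stopping rule, so it can be fooled by awkward integrands. That is the sort of weakness a production calculator routine has to guard against.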

It’s a pretty neat fact about classic HP calculators, and an interesting bit of historical context for these machines. Should you wish for something a bit more tactile and don’t mind some DIY, it’s entirely possible to re-create old HP calculators as handhelds driven by modern microcontrollers, complete with 3D-printed cases.

Thanks to [Stephen Walters] for the tip!

During the 1990s the Chornobyl Nuclear Power Plant – formerly the Chernobyl NPP – continued operating with its remaining three RBMK reactors, but of course the 1970s-era automation with its very limited SKALA computer required some serious modernization. What was interesting here is that instead of just replacing this entire Soviet-era mainframe with a brand-new 1990s one, the engineers responsible opted to build a new system – called DIIS – around it. This is detailed in a recent video by the [Chornobyl Family] on YouTube.

This SKALA industrial control system was previously detailed in a video, covering this 24-bit mainframe computer and its many limitations. It wasn’t quite a real-time control system, but it basically did what it was designed to do. Since at the time it was not clear for how long these three RBMKs would be kept running, they didn’t want to go overboard with investments either.

Ultimately Unit 2 was only active until 1991 due to a turbine fire, Unit 1 ran until 1996 and Unit 3 was shut down for the last time in 2000, so this was a sensible decision. During those years, an auxiliary information-measurement system (DIIS) was the big upgrade, which got bridged into SKALA via a Ukrainian-made SM-1210 minicomputer, with the latter connected to an 80386 PC which itself was connected to an ARCnet hub.

Continue reading “How The Chornobyl NPP Got Modernized In The 1990s”

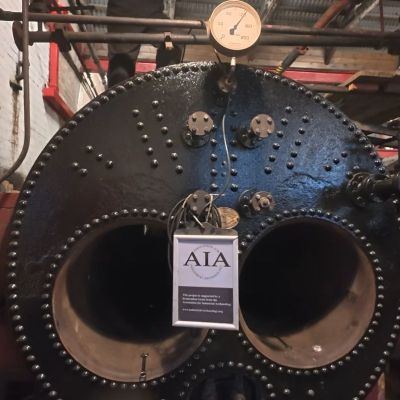

The Industrial Revolution was powered by steam, with boilers being a crucial part of each steam engine, yet also one of the most dangerous elements due to the high pressures involved. The five Lancashire boilers at the Claymills Pumping Station are relatively benign in this regard, as they operate at a mere 80 PSI unlike e.g. high-pressure steam locomotives that can push 200 – 300 PSI. This doesn’t mean that refurbishing one of these boilers is an easy task and doesn’t involve plugging a lot of leaks, as the volunteers at this pumping station found out.

At this Victorian-era pumping station there are a total of five of these twin-flue Lancashire boilers, all about 90 years old after a 1930s-era replacement, with them all gradually being brought back into service. The subject of the video is boiler 1, which was last used in 1971 before the pumping station was decommissioned. Boilers 2 and 3 were known to be in a pretty bad condition, and they needed a replacement for boiler 5 as it was about to go down for maintenance soon.

Although the basic idea behind a Lancashire boiler is still to boil water to create steam, it’s engineered to do this as efficiently as possible to save fuel. This is why it has two flues where the burning coal deposits its thermal energy, which then goes on to heat the surrounding water. The resulting pressure from the steam also means that there are a lot of safeties to ensure that things do not get too spicy.

Continue reading “How To Restore Your 19th-Century Lancashire Boiler To Hold 120 PSI”

Although digital computers are – much like their human computer counterparts – about performing calculations, another crucial element is that of memory. After all, you need to fetch values from somewhere and store them afterwards. Sometimes values need to be stored for long periods of time, making memory one of the most important elements, yet also one of the most difficult ones. Back in the 1950s the storage options were especially limited, with a 1959 Bell Labs film reel that [Connections Museum] digitized running through the bleeding edge of 1950s storage technology.

After running through the basics of binary representation and the difference between sequential and random access methods, we’re first taking a look at punch cards, which can be read at a blistering 200 cards/minute, before moving onto punched tape, which comes in a variety of shapes to fit different applications.

Electromechanical storage in the form of relays is popular in e.g. telephone exchanges, as they’re very fast. These use a two-out-of-five code to represent the digits of phone numbers, with a corresponding pack of five relays for each digit, allowing the crossbar switch to be properly configured.
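A two-out-of-five code can be sketched in a few lines of Python. The relay weights used here (0, 1, 2, 4, 7, with the digit zero encoded as 4 + 7) are a weighting commonly associated with telephone switching, but they are an assumption on our part rather than something taken from the film, and the function names are purely illustrative.

```python
# Assumed relay weights; exactly two of the five relays operate per digit.
WEIGHTS = (0, 1, 2, 4, 7)


def encode_digit(digit):
    """Return a 5-tuple of relay states (1 = operated) for a decimal digit."""
    if not 0 <= digit <= 9:
        raise ValueError("digit must be in the range 0..9")
    target = 11 if digit == 0 else digit  # zero is the special case 4 + 7
    for i in range(5):
        for j in range(i + 1, 5):
            if WEIGHTS[i] + WEIGHTS[j] == target:
                return tuple(1 if k in (i, j) else 0 for k in range(5))
    raise AssertionError("unreachable: every digit has exactly one pair")


def decode_relays(relays):
    """Decode relay states back to a digit, rejecting invalid patterns."""
    if len(relays) != 5 or sum(relays) != 2:
        # A single stuck or failed relay leaves one or three operated,
        # so the fault is detected instead of producing a wrong digit.
        raise ValueError("expected exactly two of five relays operated")
    total = sum(w for w, state in zip(WEIGHTS, relays) if state)
    return 0 if total == 11 else total


if __name__ == "__main__":
    for d in range(10):
        print(d, encode_digit(d))
```

The appeal of the scheme is the built-in error check: ten valid patterns exist out of thirty-two possible relay combinations, and any single relay fault produces a pattern that is immediately recognizable as invalid.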

Continue reading “Retrotechtacular: Bleeding-Edge Memory Devices Of 1959”