The early history of colour TV had several false starts, of which perhaps the most interesting might-have-been was the CBS field-sequential system. This was a rival to the nascent system which would become NTSC, and instead of encoding red, green, and blue all at once for each pixel, it sent them one after another in sequential fields.

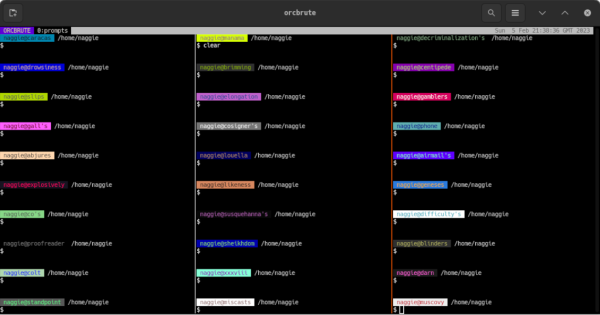

The Korean War stopped colour TV development for its duration in the early 1950s, and by the end of hostilities NTSC had matured into what we know today, so field-sequential colour became a historical footnote. But what if it had survived? [Nicole Express] takes us into this alternative history, with a look at how a field-sequential 8-bit home computer might have worked.

The CBS system had a much higher line frequency in order to squeeze in those extra fields without lowering the overall frame rate, so given the clock speeds of the 8-bit era it rapidly becomes obvious that a field-sequential computer would be restricted to a lower pixel resolution than its NTSC cousin. The fantasy computer discussed leans heavily on the Apple II, and the write-up explores in depth the clock scheme of that machine.
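To put rough numbers on that squeeze, here is a back-of-envelope sketch of our own, not taken from the linked write-up. It assumes the commonly quoted CBS figures of 405 lines and 144 fields per second with 2:1 interlace, a nominal NTSC colour line rate of about 15,734 Hz, and an Apple II-style pixel clock of roughly 7.159 MHz. The function names are ours, and blanking intervals are ignored, so the results are total clock ticks per scanline rather than visible pixels.

```python
# Nominal figures; treat them as illustrative assumptions rather than exact specs.
NTSC_LINE_HZ = 15_734.0        # NTSC colour horizontal frequency (approximate)
CBS_LINES = 405                # lines per frame in the CBS system
CBS_FIELDS_PER_SEC = 144       # colour fields per second in the CBS system
PIXEL_CLOCK_HZ = 7_159_090     # Apple II-style pixel clock (assumed for illustration)


def cbs_line_hz(lines=CBS_LINES, fields_per_sec=CBS_FIELDS_PER_SEC):
    """Line rate for a 2:1 interlaced system: each field carries half the lines."""
    return lines / 2 * fields_per_sec


def clocks_per_line(pixel_clock_hz, line_hz):
    """Pixel-clock ticks available in one full scanline, blanking included."""
    return pixel_clock_hz / line_hz


ntsc = clocks_per_line(PIXEL_CLOCK_HZ, NTSC_LINE_HZ)
cbs = clocks_per_line(PIXEL_CLOCK_HZ, cbs_line_hz())
print(f"NTSC: {ntsc:.0f} ticks/line, CBS: {cbs:.0f} ticks/line, ratio {cbs / ntsc:.2f}")
```

With the same pixel clock, the faster CBS line rate leaves only a little over half as many ticks per scanline, which is where the lower horizontal resolution comes from.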

While it would have been possible with the faster memory chips of the day to achieve a higher resolution, the conclusion is that the processor itself wasn’t up to matching the required speed. So the field-sequential computer would end up with wide pixels. After a look at a Breakout clone and how a field-sequential Atari 2600 might have worked, the verdict is that field-sequential 8-bit machines would not have been as practical as their NTSC cousins. From where we’re sitting we’d expect them to have used dedicated field-sequential CRT controller chips to take away some of the heartache, but such fantasy silicon really is pushing the boundaries.

Meanwhile, although field-sequential broadcast TV never made it, we do have field-sequential TV here in 2026, in the form of DLP projectors. We’ve seen their spinning filter disks in a project or two.

1950 CBS color logo: Archive.org, CC0.